notes on Linguistics

stuff on languages and what not

On the Nature and Scope of Linguistics

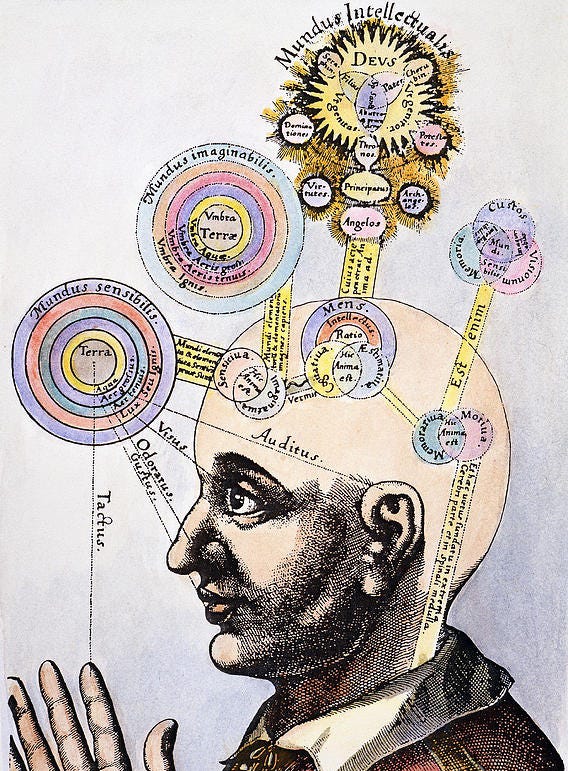

Language pervades every sphere of human life: the carved inscriptions of antiquity, the fleeting exchanges of the present, the solemn cadence of scripture, the ordinary speech of markets. It is the vessel through which thought is shaped, preserved, and renewed. Spoken, written, or signed, it manifests an inward faculty that is at once universal and particular—formed by necessity, yet bound by convention. The study of this faculty—its structures, principles, and functions—is the province of linguistics.

Linguistics is not a mere catalog of words nor a record of their histories, but an inquiry into the laws by which language operates, its origins, and its influence upon thought and society. It asks how a single human capacity underlies the multitude of tongues, and how, despite each person’s unique use of speech, discernible patterns govern its practice.

The philosopher’s parable of the traveler who hears a native exclaim gavagai upon the sight of a rabbit reveals the central challenge: words never announce their meanings. They may denote an object, an action, or nothing more than an exclamation. Meaning is inferred, not given, and the interpretation of language depends upon context, practice, and convention.

Several properties distinguish language from other forms of communication. Its basic units—sounds, signs, or symbols—are meaningless in isolation yet infinitely productive in combination, a duality that allows boundless expression from finite means. The bond between word and referent is arbitrary: the creature called rabbit in one tongue is conejo in another. Language also transcends immediacy; it speaks of past and future, of the absent and the imagined. It reflects upon itself, granting us the very means by which we inquire into its nature.

This sets language apart from the signaling of animals. The bee’s dance, precise though it may be, conveys only the location of nectar; the parrot mimics without comprehension; the hound’s wagging tail communicates state but not speculation. Human language, by contrast, is an invention of thought itself: it binds communities, records civilizations, and preserves knowledge.

Despite its infinite variety—over seven thousand tongues, each with countless dialects—language reveals common structures. Its smallest sounds and gestures fall under phonetics; their patterned arrangements under phonology. Words, far from indivisible, are built from meaningful units (morphology), then combined by rules of syntax into sentences. Meaning, in words and discourse alike, is the realm of semantics and pragmatics. Linguists study these levels not in isolation, but in their relation to mind, society, and history, employing observation, experiment, and introspection alike.

The aim of linguistics is not to enforce correctness—no more than the naturalist would scorn a sparrow for failing to sing like a parrot—but to understand the diversity and unity of human speech. Its applications reach far: in medicine, where speech disorders are treated; in technology, where machines are taught to comprehend words; in law, where texts are interpreted; in education, literature, and journalism—everywhere that mastery of language is essential.

To study language is to study mankind. It is the bridge between minds, the medium of thought and tradition, the record of civilization and the condition of its perpetuation. Linguistics, therefore, is not simply the study of words, but of the very fabric of human existence.

On Morphemes and the Structure of Words

In the study of language, the word may be understood broadly as a unit of meaning preserved in the lexicon, or more narrowly as a structure composed of smaller parts. Lexicographers record lexemes—the largest units of unpredictable sense. Thus rabbit hole merits entry, for its meaning cannot be inferred from its components, while deep hole does not, since its meaning follows directly from deep and hole.

Closer analysis reveals that many words are not indivisible. In falling down rabbit holes, one may distinguish fall, -ing, down, rabbit, hole, and -s. Though -ing and -s cannot stand alone, they contribute meaning: plurality, aspect, or relation. Such elements are morphemes, the smallest units of form and meaning. The study of their patterns is morphology, from the Greek morphē—“form”—for morphemes give structure to words.

Morphemes fall into two classes. Free morphemes, like rabbit or hole, can appear independently, while bound morphemes, like -s or -ing, require attachment. Their combination yields compounds (doghouse, rabbit hole) or affixed forms (untwistable, where un- negates, twist provides the base, and -able conveys possibility). Roots often coincide with free morphemes, though some, such as -ceive in receive or conceive, are bound and appear only in combination with a prefix.
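The division of untwistable into prefix, root, and suffix can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the tiny lexicon and the function name `segment` are hypothetical, and the sketch handles at most one prefix and one suffix per word.

```python
# Hypothetical toy lexicon; a real analyzer would need a far larger one.
PREFIXES = {"un": "negation"}
SUFFIXES = {"able": "possibility", "ing": "aspect", "s": "plural"}
ROOTS = {"twist", "rabbit", "hole", "fall"}


def segment(word):
    """Split a word into (prefix, root, suffix) using the toy lexicon.

    An empty string stands for "no prefix" or "no suffix".
    Returns None when no analysis is found.
    """
    for prefix in [""] + list(PREFIXES):
        if not word.startswith(prefix):
            continue
        rest = word[len(prefix):]
        for suffix in [""] + list(SUFFIXES):
            if suffix and not rest.endswith(suffix):
                continue
            root = rest[:len(rest) - len(suffix)]
            if root in ROOTS:
                return (prefix, root, suffix)
    return None


print(segment("untwistable"))  # bound un- and -able around the free root twist
print(segment("holes"))        # free root hole plus bound -s
```

The free morphemes are exactly those that `segment` can return with both affix slots empty; the bound ones appear only in the prefix or suffix position.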

Languages employ diverse morphological strategies. English relies chiefly on affixation, with prefixes, suffixes, and occasional infixes, while others use circumfixes, as in Malay. Some languages alter meaning through internal changes: foot/feet, or the Semitic pattern where consonantal roots combine with shifting vowels to yield related words (book, writer, office). Still rarer is suppletion, where one form wholly replaces another, as in go/went.
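The Semitic pattern can be illustrated with a short sketch. It assumes the Arabic root k-t-b as the example behind the glosses (the text above does not name the language), and the slot notation `C1`, `C2`, `C3` and the function name `interdigitate` are conventions invented here for illustration.

```python
def interdigitate(root, template):
    """Fill the slots C1, C2, C3 of a template with the root's consonants."""
    result = template
    for i, consonant in enumerate(root, start=1):
        result = result.replace(f"C{i}", consonant)
    return result


# Assumed example: the Arabic consonantal root k-t-b, associated with writing.
ROOT = ("k", "t", "b")

# Each template supplies the vowels (and sometimes a prefix) around the root.
TEMPLATES = {
    "book": "C1iC2āC3",
    "writer": "C1āC2iC3",
    "office": "maC1C2aC3",
}

for gloss, template in TEMPLATES.items():
    print(gloss, "->", interdigitate(ROOT, template))
```

The root never surfaces on its own; only the combination of root and vowel pattern yields a pronounceable word.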

Morphology also varies in degree of fusion. In isolating languages such as Mandarin, most morphemes stand as separate words, while in polysynthetic languages like Murrinhpatha, an entire sentence may be expressed as a single complex word. Fusional languages blend multiple meanings into a single form, as in French -aux, which simultaneously marks plural number and masculine gender.

On the Structure of Sentences and the Interplay of Form and Meaning

All languages devise means to distinguish the relations of words in a sentence, and the science of syntax concerns itself with these arrangements. One such means is a conventional word order. In English, Portuguese, Czech, and Nahuatl, the basic sequence is subject–verb–object, while in Hindi and Korean the object precedes the verb, and in Irish, Hawaiian, Māori, and Malagasy the verb comes first.

Another means is morphology: the affixation of morphemes that mark a noun’s role. In Latin, for example, word order may remain unchanged while endings determine meaning: hospes leporem videt (“the host sees the rabbit”) contrasts with hospitem lepus videt (“the rabbit sees the host”). Such freedom permits Latin, like Turkish, Greek, and Yupik, to use sequence for emphasis or style rather than necessity.
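A small sketch may make the Latin case concrete: if roles are read from the endings, shuffling the words leaves the interpretation untouched. The lexicon below lists only the forms from the example, and the role labels and the function name `interpret` are assumptions made for illustration.

```python
# Toy lexicon: each word form maps to (gloss, grammatical role).
# Only the forms from the example sentences are listed.
LEXICON = {
    "hospes": ("host", "subject"),     # nominative
    "hospitem": ("host", "object"),    # accusative
    "lepus": ("rabbit", "subject"),    # nominative
    "leporem": ("rabbit", "object"),   # accusative
    "videt": ("sees", "verb"),
}


def interpret(sentence):
    """Return (subject, verb, object) glosses, ignoring word order."""
    roles = {}
    for form in sentence.lower().split():
        gloss, role = LEXICON[form]
        roles[role] = gloss
    return roles["subject"], roles["verb"], roles["object"]


print(interpret("hospes leporem videt"))
print(interpret("leporem hospes videt"))  # same roles, different order
print(interpret("hospitem lepus videt"))  # roles reversed by the endings
```

Nothing in `interpret` looks at position, which is the point: in a language of this type, the sequence is free to serve emphasis or style.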

Signed languages also express these relations. In American Sign Language, spatial reference allows the motion of a sign to indicate subject and object. English once relied more heavily on morphology for the same purpose, but now word order and remnants of inflection—such as in I see them—work together to mark roles. When these systems clash, as in me see they, the result feels ungrammatical.

Yet grammaticality is not the same as sense. Noam Chomsky’s famous example—Colorless green ideas sleep furiously—is grammatically sound but semantically absurd. By contrast, Furiously sleep ideas green colorless is both ungrammatical and nonsensical. That speakers effortlessly recognize this distinction without instruction testifies to an innate grasp of grammar.

Grammar, however, is not fixed by prescriptive rules. Dialects reveal alternate systems, as in Don’t nobody know nothing, which follows a consistent pattern though it diverges from the standard. Each speaker commands an idiolect—an individual grammar shaped by experience—so intuitions may differ subtly across communities.

To uncover sentence structure, linguists use tests that reveal constituents, the units of syntax. The substitution test shows that in Taylor sees the rabbit, both Taylor and the rabbit may be replaced by longer phrases or pronouns, confirming their integrity as constituents. The cleft test recasts sentences as It is X that Y, demonstrating that It is Taylor who sees the rabbit and It is the rabbit that Taylor sees are valid, while It is Taylor sees that the rabbit is not. Because the subject and verb cannot be clefted together, such analysis reveals that verbs and their objects form a tighter bond than subjects and verbs, a fact central to understanding English syntax.

Languages differ in how these constituents are revealed. English relies heavily on word order, while in Latin or Turkish they may be scattered, bound together not by sequence but by shared affixes.

The Branching Structure of Sentences: An Examination of Syntactic Trees

Language demands structure, for without order, meaning falters. To uncover this order, linguists seek not merely to list words, but to reveal the hidden bonds that join them. Early methods—circling words or enclosing them in brackets—proved insufficient. Hence the syntactic tree was devised: a branching diagram where each node represents a constituent, showing how words unite to form phrases and sentences.

A phrase, larger than a word yet smaller than a sentence, may be named by its head. Thus, “the rabbit” is a noun phrase (NP), while “sees the rabbit” is a verb phrase (VP). Determiners such as the or a anchor nouns, and prepositions such as on or with introduce prepositional phrases (PP), binding nouns to the sentence. By convention, linguists abbreviate these roles—NP, VP, PP—to clarify the underlying order.

Though languages differ in word order, syntactic trees reveal a deeper consistency: verbs and their objects form a natural bond. Whether in English, Latin, or Japanese, this relation persists, altered only in surface arrangement. In English, sentences divide into subject and predicate, each open to substitution. “Taylor sees the rabbit” may become “The rabbit ate cake,” yet the pattern endures: a noun phrase followed by a verb phrase. Wrong divisions—“Gavagai ate rabbit ate cake”—betray the structure, producing ungrammatical forms.

Within these patterns, heads govern complements. A noun phrase centers on a noun, a verb phrase on a verb, a prepositional phrase on a preposition. Complexity arises through expansion: “The rabbit with a scarf hopped” or “Gavagai ate cake on the moon” extend simple rules without breaking them. Such growth reflects recursion, whereby structures contain smaller versions of themselves. A chain of prepositional phrases—“The rabbit on the moon in the solar system in the Milky Way…”—demonstrates this potentially infinite embedding.
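Recursion of this kind is easy to show in code. The sketch below assumes two simplified rules, NP → Det N (PP) and PP → P NP, and represents a tree as a nested list whose first element is the label; the function names are invented for illustration.

```python
def noun_phrase(noun, modifiers=()):
    """Build an NP tree; each (preposition, noun) pair nests one PP deeper."""
    tree = ["NP", ["Det", "the"], ["N", noun]]
    if modifiers:
        preposition, inner_noun = modifiers[0]
        # The PP contains another NP built by this same function: recursion.
        tree.append(["PP", ["P", preposition],
                     noun_phrase(inner_noun, modifiers[1:])])
    return tree


def words(tree):
    """Read the words off the leaves of a tree, left to right."""
    if isinstance(tree, str):
        return [tree]
    result = []
    for child in tree[1:]:
        result.extend(words(child))
    return result


def count_label(tree, label):
    """Count how many nodes in the tree carry the given label."""
    if isinstance(tree, str):
        return 0
    return (tree[0] == label) + sum(count_label(c, label) for c in tree[1:])


tree = noun_phrase("rabbit", [("on", "moon"), ("in", "solar system"),
                              ("in", "Milky Way")])
print(" ".join(words(tree)))
print(count_label(tree, "NP"), "noun phrases,", count_label(tree, "PP"), "prepositional phrases")
```

Every additional modifier adds one more NP inside one more PP, and nothing in the rules sets a limit on how far this may go.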

Yet structure alone does not resolve all ambiguities. Words of identical form may serve distinct functions, as in “Time flies like an arrow” versus “Fruit flies like a banana.” Likewise, in “One-eyed one-horned flying purple people eater,” syntax admits multiple interpretations, each hinging on how phrases are grouped. Such cases remind us that meaning rests on structure, and structure is best revealed through trees.
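That one string of words can carry more than one tree may be checked mechanically. The following sketch counts the trees a toy grammar assigns to a sentence, using a chart in the style of the CKY algorithm. The grammar and lexicon are assumptions chosen to cover only these examples; a fuller grammar would admit further readings.

```python
from collections import defaultdict

# Toy lexicon: the categories each word may bear (an assumption for this sketch).
LEXICON = {
    "time": {"N", "NP"}, "fruit": {"N", "NP"},
    "flies": {"N", "V"}, "like": {"P", "V"},
    "an": {"Det"}, "a": {"Det"},
    "arrow": {"N"}, "banana": {"N"},
}

# Binary rules, written as (parent, left child, right child).
RULES = [
    ("S", "NP", "VP"),
    ("NP", "Det", "N"),
    ("NP", "N", "N"),
    ("VP", "V", "NP"),
    ("VP", "V", "PP"),
    ("PP", "P", "NP"),
]


def count_parses(sentence, goal="S"):
    """Count the distinct trees the toy grammar assigns to the sentence."""
    tokens = sentence.lower().split()
    n = len(tokens)
    # chart[(i, j)][label] = number of ways tokens[i:j] can form that label
    chart = defaultdict(lambda: defaultdict(int))
    for i, token in enumerate(tokens):
        for label in LEXICON[token]:
            chart[(i, i + 1)][label] += 1
    for width in range(2, n + 1):
        for i in range(n - width + 1):
            j = i + width
            for k in range(i + 1, j):
                for parent, left, right in RULES:
                    chart[(i, j)][parent] += chart[(i, k)][left] * chart[(k, j)][right]
    return chart[(0, n)][goal]


print(count_parses("Time flies like an arrow"))    # 2 under this toy grammar
print(count_parses("Fruit flies like a banana"))   # 2 under this toy grammar
```

The two trees correspond to the two groupings: either flies is the verb and like introduces a prepositional phrase, or the first two words form a noun phrase and like is the verb.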

From simple rules and diagrams, linguists build grammars—systems that describe lawful combinations of words. These grammars are powerful but never complete, for language admits endless refinement. Still, the branching tree remains one of the clearest mirrors of syntax: a map of how thought takes shape in speech, where the order of words becomes the order of meaning.

The Science of Meaning: An Inquiry into Semantics

To inquire into meaning, one must first attend to definition: the precise account of how a word is used. Dictionaries reveal networks of relations among words—synonyms such as happy, glad, joyful; antonyms such as inside and outside; hierarchies such as red (a kind of color) or rabbit (a kind of animal). These are known as hyponyms and hypernyms, for a term may at once belong to a larger class and itself encompass narrower kinds.
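Such hierarchies lend themselves to a simple sketch in which each word points to its hypernym. The entry for scarlet is an addition made here for illustration, so that red can be seen both as a kind of color and as a class with a narrower kind beneath it.

```python
# Each word points to its hypernym (the broader class it belongs to).
# "scarlet" is a hypothetical extra entry, added only to give "red" a hyponym.
HYPERNYM = {
    "scarlet": "red",
    "red": "color",
    "rabbit": "animal",
}


def hypernym_chain(word):
    """List the ever-broader classes above a word."""
    chain = []
    while word in HYPERNYM:
        word = HYPERNYM[word]
        chain.append(word)
    return chain


def hyponyms(word):
    """List the narrower kinds recorded directly beneath a word."""
    return sorted(w for w, parent in HYPERNYM.items() if parent == word)


print(hypernym_chain("scarlet"))  # red is a hypernym of scarlet...
print(hyponyms("color"))          # ...and a hyponym of color
```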

Yet languages divide meaning differently. English has but one know, while Polish distinguishes between wiem (knowledge of fact) and znam (acquaintance). Portuguese fazer combines both “to do” and “to make.” Thus, translation requires more than replacing words: it must reckon with the conceptual boundaries each tongue draws.

Meaning, however, is not fixed. Words broaden (thing once denoted an assembly, now any object), narrow (girl once meant any child, now only female), or shift entirely (nice once “ignorant,” now “pleasant”). Taboo hastens change: terms for bodily functions are softened by euphemism, only to acquire the same force and be replaced again.

Even stable words admit ambiguity. A single form may hold several senses: bank names both a river’s edge and a financial house (homonymy when the senses are unrelated, polysemy when they are related). Other words resist precise definition: a sandwich may or may not include rolls, wraps, or pizzas. Attempts at rigid categories expose uncertainty.

To address this, Eleanor Rosch proposed prototype theory: categories are structured around central examples, with less typical members at the margins. A robin is a prototypical bird; an emu, though flightless, remains a bird. A chair with four legs is central; a stool, though lacking a back, still belongs. This view allows flexibility where strict definitions fail.

Yet not all words fit prototypes. Content words—nouns, verbs, adjectives—lend themselves to such models. Function words—articles, prepositions, auxiliaries—do not. Their meaning arises only from grammatical role. To analyze them, scholars turn to formal semantics and predicate calculus, where the meaning of a sentence is equated with the conditions under which it is true.

Here, quantifiers such as all and a reveal hidden ambiguities. “All hosts like a rabbit” may mean there is one rabbit beloved by all, or that each host has a different rabbit. Symbolic logic disentangles such structures, making explicit what ordinary language obscures.
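The two readings can be tested against a small imagined situation. In the sketch below, the hosts, the rabbits, and the `likes` relation are invented for illustration; each function spells out one order of the quantifiers.

```python
def each_host_likes_some_rabbit(hosts, rabbits, likes):
    """Reading 1: for every host there is some rabbit (possibly different) they like."""
    return all(any((h, r) in likes for r in rabbits) for h in hosts)


def one_rabbit_liked_by_all(hosts, rabbits, likes):
    """Reading 2: there is a single rabbit that every host likes."""
    return any(all((h, r) in likes for h in hosts) for r in rabbits)


# Hypothetical situation: each host likes a different rabbit.
hosts = {"Ana", "Ben"}
rabbits = {"Flopsy", "Mopsy"}
likes = {("Ana", "Flopsy"), ("Ben", "Mopsy")}

print(each_host_likes_some_rabbit(hosts, rabbits, likes))  # True
print(one_rabbit_liked_by_all(hosts, rabbits, likes))      # False
```

The same sentence is thus true of this situation on one reading and false on the other, which is precisely what it means for the readings to differ in their truth conditions.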

Other methods complement these approaches. Binary feature analysis classifies terms through oppositions, as in kinship. Natural Semantic Metalanguage seeks irreducible primitives of meaning. Cognitive semantics traces how metaphor structures thought, allowing abstract notions (such as time) to be conceived through spatial or physical imagery. Each method illuminates aspects of meaning; none alone suffices.

Thus, semantics is not a single science but a constellation of approaches—lexical, logical, cognitive, and cultural—each revealing part of language’s subtle architecture. Words shift, categories blur, meanings diverge across tongues; yet through these inquiries, we approach an understanding of how language binds thought to expression.

On the Use and Context of Language: An Exploration of Pragmatics

In human discourse, words seldom suffice on their own; understanding is completed by judgment, expectation, and context. To account for this, scholars have observed four guiding principles of communication: speech is expected to be truthful in quality, sufficient in quantity, pertinent in relevance, and clear in manner.

First, there is the presumption of truth. When one remarks, “Great job, Sherlock,” the phrase may convey praise or, conversely, irony. The hearer recognizes the intent not by the words alone but by the assumption that speech ordinarily communicates genuine knowledge. When words conflict with circumstance, another meaning—sarcasm, jest, or criticism—is inferred.

Second, speech must be proportionate. Too little or too much information violates expectation. To see a great flock of ducks and announce, “There are at least ten,” is true, yet oddly inadequate. Such departures from what the occasion demands may amuse, perplex, or mislead.

Third, speech is assumed to be relevant. If a label upon gum declares “sugar-free,” the notice is meaningful, for gum is usually sweetened. But if olive oil bore the same claim, one might mistakenly infer that oils commonly contain sugar. Relevance, or the lack thereof, directs interpretation.

Fourth, clarity is expected. When asked whether a course is worthwhile, an answer such as, “It is a course,” conceals rather than conveys judgment. Likewise, when obvious details are needlessly multiplied, suspicion arises. Indirectness often signals caution, diplomacy, or reluctance.

These four expectations—truth, sufficiency, relevance, and clarity—are encompassed by Paul Grice’s Cooperative Principle. According to this principle, conversation proceeds on the assumption that speakers cooperate in meaning-making. When words deviate from their literal sense, listeners supply the missing link through inference. Thus, if one replies, “It is raining,” to the proposal of a picnic, the refusal is understood though never spoken. This surplus of meaning, drawn from implication rather than words alone, is called implicature.

Politeness, too, is shaped by such mechanisms. In Malay, the particle lah softens a command; in Mandarin, repetition renders an imperative more courteous. French distinguishes tu from vous to mark intimacy or formality. Often, indirectness itself serves courtesy: “It is cold,” suggests that a window be shut, sparing the force of command.

The rhythm of speech likewise varies across cultures. In some communities, conversation overlaps, voices flowing together; in others, pauses mark each turn. Japanese and Tzeltal speakers often favor overlap, while Lao and Danish speakers prefer silence between turns. Even within a single language, such as English, New Yorkers tend toward rapid exchange, while Californians leave greater intervals. Though measured in milliseconds, such patterns are felt keenly as the pulse of conversation.

Thus, meaning resides not in words alone but in their use—completed by inference, softened by courtesy, and shaped by cultural rhythm. Language is not a fixed code but a living practice, wherein men and women, by shared expectations, make sense of what is spoken and of what remains unsaid.

The Relation Between Language and Society: An Examination of Sociolinguistics

Language is shaped by human company. We learn to speak not in isolation but in resemblance to those around us. This resemblance extends beyond sounds and accents to vocabulary, grammar, and style. In earlier centuries, before printing and mass literacy, place most strongly determined speech. Those bound to a region spoke as their neighbors spoke, and thus arose the first studies of linguistic variation—dialectology—concerned with how language changes across geography.

The earliest dialectologists, armed only with paper, pen, and attentive ear, traveled from village to village recording speech. Later, wax cylinders, magnetic tapes, telephones, and eventually the internet expanded their reach. Patterns soon became clear: languages long rooted in one place often splinter into numerous varieties, as in the villages of Switzerland or the highlands of Papua New Guinea. By contrast, languages carried outward through conquest or trade—Arabic, Chinese, English, French, Spanish—show less local variation, differing instead across great distances.

Variation, however, is not only geographic. Within a single city or nation, speech reflects social position, education, age, gender, ethnicity, and occupation. The scholar and the laborer, the elder and the youth, the wealthy and the poor all adapt language to their social worlds. Even signed languages show this diversity: American Sign Language resembles French Sign Language more than it does British Sign Language, while in Ireland men and women once learned separately, producing distinct gendered dialects. In the United States, racial divisions once shaped signing as well, with Black and White communities developing different varieties.

Language also shifts with circumstance, a phenomenon known as code-switching. One may speak in formal tones before authority yet revert to colloquial speech among friends. Such shifts are strategic, for language carries consequence. Studies have shown that African American English, for instance, can provoke discrimination in housing and employment. Its rhythms and expressions may be admired when imitated by outsiders, yet stigmatized when used by its own speakers—a reminder that prejudice, not linguistic worth, dictates such judgments.

For every variety of speech is governed by rules, shaped by history, and suited to the needs of its speakers. What some deem refined and others coarse reflects not inherent linguistic quality but social esteem. To study language rightly is to look past prejudice, recognizing in each dialect the elegance of its structure and the ingenuity of its form.

On the Articulation and Properties of Consonantal Sounds

Human speech differs from the inarticulate sounds of nature—coughs, sneezes, or cries—in that it is shaped and ordered to convey thought. From a finite set of sounds arises the vast variety of words, made possible by the way breath, passing from the lungs through the vocal folds, is molded within the vocal tract. This tract, like a musical instrument, alters tone and quality through the movement of the tongue, lips, and other articulators. In spoken languages these are organs of the mouth; in signed languages they are the hands, face, and body, where properties such as handshape, palm orientation, movement, position, and expression form the basis of meaning. Though systems exist to record these gestures, no universal writing system has yet been adopted.

In spoken language, the manner of articulation is central. Consonants occur when the vocal tract is narrowed or obstructed, while vowels arise when it remains open but shaped. The simplest consonants are the stops (/p/, /t/, /k/), produced by briefly blocking and releasing airflow. Each is defined by its place of articulation: /p/ at the lips (bilabial), /t/ at the alveolar ridge (alveolar), and /k/ at the velum (velar). Other consonants, the fricatives, involve partial obstruction, forcing air through a narrow gap, as in /f/ or /s/. Consonants are further distinguished by voicing, that is, by whether the vocal folds vibrate: /s/ is voiceless, while /z/ is voiced. Such pairs (/f/ and /v/, /p/ and /b/, /t/ and /d/) are found in many of the world’s languages.

Another class, the nasals, directs air through the nose: /m/, /n/, and /ŋ/ (as in sing). These align with their oral counterparts by place of articulation. Other consonants, such as /w/, involve double articulation, using two points in the vocal tract at once. Still more unusual are consonants produced without air from the lungs: implosives, ejectives, and clicks—the latter used systematically in languages like Zulu and Xhosa.
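The classification by voicing, place, and manner can be laid out as a small table in code. The sketch covers only a handful of the sounds mentioned; the labiodental label for /f/ and /v/ and the entry for /ɡ/ are standard descriptions supplied here, and the function names are invented for illustration.

```python
from collections import namedtuple

Consonant = namedtuple("Consonant", ["voiced", "place", "manner"])

# A small sample of consonants, each described by three features.
CONSONANTS = {
    "p": Consonant(False, "bilabial", "stop"),
    "b": Consonant(True, "bilabial", "stop"),
    "t": Consonant(False, "alveolar", "stop"),
    "d": Consonant(True, "alveolar", "stop"),
    "k": Consonant(False, "velar", "stop"),
    "ɡ": Consonant(True, "velar", "stop"),
    "f": Consonant(False, "labiodental", "fricative"),
    "v": Consonant(True, "labiodental", "fricative"),
    "s": Consonant(False, "alveolar", "fricative"),
    "z": Consonant(True, "alveolar", "fricative"),
    "m": Consonant(True, "bilabial", "nasal"),
    "n": Consonant(True, "alveolar", "nasal"),
    "ŋ": Consonant(True, "velar", "nasal"),
}


def find(voiced, place, manner):
    """Return the symbol with exactly these features, or None if not in the table."""
    target = Consonant(voiced, place, manner)
    for symbol, features in CONSONANTS.items():
        if features == target:
            return symbol
    return None


def voicing_partner(symbol):
    """Same place and manner, opposite voicing."""
    c = CONSONANTS[symbol]
    return find(not c.voiced, c.place, c.manner)


def nasal_at_same_place(symbol):
    """The nasal articulated at the same place as the given consonant."""
    return find(True, CONSONANTS[symbol].place, "nasal")


print(voicing_partner("s"))      # the voiced counterpart of /s/
print(nasal_at_same_place("k"))  # the nasal that shares the velar place
```

Because every sound is a bundle of a few features, relations such as voicing pairs and matching nasals fall out of simple lookups rather than needing to be listed one by one.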

To classify these sounds, phoneticians devised the International Phonetic Alphabet (IPA) in the late nineteenth century. Each distinct sound is given a single symbol, mostly adapted from Latin and Greek letters, ensuring clarity and consistency. Unlike vocabulary, which grows without limit, the IPA is bounded by the finite possibilities of the human vocal tract. Its chart arranges sounds by place and manner of articulation, so that even an unfamiliar symbol reveals its properties—whether voiced or voiceless, labial or velar, plosive or fricative. Blank spaces mark unattested sounds; shaded areas indicate sounds impossible for human speech.

Before sound recording, the IPA was essential for linguists, singers, and teachers of speech. Even now, in an age of digital recording and machine learning, it remains indispensable for studying languages, teaching pronunciation, and training computers to recognize and reproduce human speech. With consonants thus examined, attention turns next to their counterparts: the vowels.

On the Articulation and Properties of Vowel Sounds

Vowels are produced with an open vocal tract, the air passing freely without obstruction. Their quality depends not on closures of the mouth, but on the position and movement of the tongue, the openness of the jaw, and, in many cases, the rounding of the lips. English, though written with five vowel letters (sometimes six with y), possesses far more vowel sounds—between twelve and twenty-one depending on the dialect. A single sentence may reveal this diversity: Who would know aught of art must learn, act, and then take his ease. Its vowels, though represented by few letters, vary widely in pronunciation, demonstrating the inadequacy of ordinary spelling and the necessity of the International Phonetic Alphabet (IPA) for accurate description.

The organization of vowels is best understood through the concept of vowel space, which maps them according to three features:

Height (high/close, mid, or low/open), determined by how near the tongue is to the roof of the mouth.

Frontness or backness, determined by how far forward or back the tongue is positioned.

Lip rounding, which adds an additional distinction.

Thus, [i] is a high front unrounded vowel, as in machine, while [æ] is a low front unrounded vowel, as in cat. Moving back in the mouth, [ɑ] (as in father) contrasts with [u] (as in goose), the latter marked by both height and lip rounding. Exceptions also exist: [y], found in French and German, is a high front rounded vowel, combining properties usually separated.

At the center of vowel space lies schwa [ə], neither high nor low, front nor back. It is the most common vowel in English, typically found in unstressed syllables (sofa, about).
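The three features of vowel space can likewise be written down as a table. The sketch below records only the vowels named above, with the simplified labels used in this section; the function names are invented for illustration.

```python
# Each vowel: (height, backness, rounded?)
VOWELS = {
    "i": ("high", "front", False),   # machine
    "y": ("high", "front", True),    # French and German
    "u": ("high", "back", True),     # goose
    "æ": ("low", "front", False),    # cat
    "ɑ": ("low", "back", False),     # father
    "ə": ("mid", "central", False),  # sofa, about
}


def describe(symbol):
    """Spell out a vowel's features in words."""
    height, backness, rounded = VOWELS[symbol]
    rounding = "rounded" if rounded else "unrounded"
    return f"{height} {backness} {rounding} vowel"


def differing_features(a, b):
    """Name the features on which two vowels differ."""
    names = ("height", "backness", "rounding")
    return [n for n, x, y in zip(names, VOWELS[a], VOWELS[b]) if x != y]


print(describe("i"))
print(differing_features("i", "y"))  # only lip rounding separates these two
print(differing_features("ɑ", "u"))  # height and rounding both differ
```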

In addition to these simple vowels, languages employ diphthongs—sounds that glide from one vowel position to another. English examples include [aɪ] (time), [aʊ] (house), and [ɔɪ] (choice). Such sounds demonstrate that vowels are not always static, but may shift dynamically during articulation.

The vowel space is conventionally depicted as a trapezoid, reflecting the natural limits of tongue and jaw movement. Languages vary widely in how much of this space they exploit. Some, such as those of Southeast Asia and equatorial Africa, possess large vowel inventories, while others, like Arabic or certain Australian languages, make do with far fewer.

The IPA chart represents these distinctions with precision. Beyond basic vowels, diacritics extend its range, marking length, nasalization, or tonal pitch—features crucial in languages such as Japanese, French, and Arabic, and essential in tonal languages like Mandarin, where vowel pitch alone alters meaning.

Through such systematic mapping, vowels can be studied not only as isolated sounds, but also in their patterns, contrasts, and transformations. This prepares the way for phonology, which considers their role within the living flow of speech.

The Study of Phonemes and Their Organization in Language

Phonology, the study of how sounds function within language, requires a shift in perception. Much like an optical illusion, its patterns are not immediately evident. Infants can discern a wide range of sound contrasts, yet as they grow, their perception narrows, aligning with the phonological boundaries of their native tongue.

At the core of this field lies the distinction between phones and phonemes. A phone is any speech sound in its raw, physical form, while a phoneme is a category of sound that distinguishes one word from another within a given language. For example, in English, the difference between /t/ and /d/ distinguishes words such as rabbit and rabid. Conversely, English treats aspirated [tʰ] (as in team) and unaspirated [t] (as in steam) as mere variations of the same phoneme. In Nepali, however, this same contrast separates words entirely: tal (“lake”) and tʰal (“plate”) differ only in aspiration, making the two sounds distinct phonemes.

Such variations, when they do not alter meaning within a language, are termed allophones. They reveal how each language draws its own map of which differences matter and which do not.
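The usual evidence for a phonemic contrast is the minimal pair: two words with different meanings that differ in a single sound. A rough sketch of the check follows; the broad transcriptions are simplified, and the function names are invented for illustration.

```python
def is_minimal_pair(a, b):
    """True when two phone sequences differ in exactly one position."""
    return len(a) == len(b) and sum(x != y for x, y in zip(a, b)) == 1


def contrast(a, b):
    """Return the pair of sounds that distinguishes a minimal pair."""
    if not is_minimal_pair(a, b):
        return None
    return next((x, y) for x, y in zip(a, b) if x != y)


# Words are lists of phones so that a symbol like "tʰ" counts as one sound.
rabbit = ["r", "æ", "b", "ɪ", "t"]
rabid = ["r", "æ", "b", "ɪ", "d"]
nepali_lake = ["t", "a", "l"]
nepali_plate = ["tʰ", "a", "l"]

print(contrast(rabbit, rabid))              # /t/ versus /d/ in English
print(contrast(nepali_lake, nepali_plate))  # plain versus aspirated in Nepali
```

The check itself is the same for every language; what differs is which pairs of words exist. English offers no pair of words kept apart by [t] and [tʰ] alone, so the two count there as allophones.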

Importantly, phonology is not confined to spoken languages. Signed languages exhibit parallel structures, with distinctions marked by handshape, orientation, and movement. Processes familiar to spoken language—assimilation, dissimilation, and other adjustments—also shape the phonological systems of sign.

Phonological processes illustrate how languages evolve for ease of articulation and clarity. Assimilation reduces complexity, as in the common pronunciation of handbag as [ˈhæmbæɡ], where /n/ takes on the bilabial place of the following /b/. Other processes, such as deletion, insertion, and metathesis, likewise reshape words in fluent speech. Contractions like I’ve or can’t exemplify the same drive toward efficiency.
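Assimilation can be written as a rule that rewrites one sound in the neighborhood of another. The sketch below implements a single simplified rule, /n/ becoming /m/ before a bilabial consonant, and assumes that the /d/ of handbag has already been dropped in fluent speech. The function name and the transcriptions are illustrative.

```python
BILABIALS = {"p", "b", "m"}


def assimilate_nasal(phones):
    """Change /n/ to /m/ whenever the next sound is bilabial."""
    result = list(phones)
    for i in range(len(result) - 1):
        if result[i] == "n" and result[i + 1] in BILABIALS:
            result[i] = "m"
    return result


# "handbag" after the /d/ has been deleted (an assumed intermediate form)
handbag = ["h", "æ", "n", "b", "æ", "ɡ"]
print("".join(assimilate_nasal(handbag)))

# No bilabial follows the /n/ here, so nothing changes.
hand = ["h", "æ", "n", "d"]
print("".join(assimilate_nasal(hand)))
```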

Though often subtle, these processes are central to the life of language. The study of phonology not only clarifies how sounds and signs are organized, but also deepens our understanding of human communication. By tracing these patterns, we uncover the hidden structure of speech, an insight that enriches both linguistic science and practical expression.

On the Interrelation Between Language and the Mind: An Inquiry into Psycholinguistics

The systematic study of language and the brain began in the nineteenth century, when physicians observed the effects of brain damage on speech. This research revealed two distinct forms of aphasia. Broca’s aphasia, caused by injury near the left temple, impairs fluent speech or signing while sparing comprehension, reflecting the role of this region in morphosyntax. Wernicke’s aphasia, linked to a region above the left ear, preserves fluency and grammar but undermines meaning, producing nonsensical speech.

Subsequent discoveries, however, showed that these findings were not absolute. Some individuals with damage to Broca’s area did not develop aphasia, and others relearned speech through singing, a function supported by different regions. These cases highlighted the principle of neuroplasticity, the brain’s capacity to reorganize and adapt. Likewise, while language is usually lateralized in the left hemisphere, in left-handed or ambidextrous individuals it may reside in the right hemisphere or be distributed across both.

Psycholinguistics also investigates language errors, which provide a window into cognitive processes. “Tip of the tongue” states—when a word is almost, but not fully, retrievable—suggest that words are stored not as whole units but in interconnected fragments. In signed languages, the equivalent “tip of the fingers” phenomenon involves recalling partial features of a sign, such as handshape or location, while failing to access the full form. Such slips, though fleeting, reveal how knowledge is organized in the mind.

Beyond error, perception itself shows traces of meaning. The well-known Kiki-Bouba experiment demonstrates a natural mapping between sound and shape: angular figures are typically labeled “Kiki,” while rounded ones are “Bouba.” Though not universal, such associations suggest that language is influenced by sensory experience, rather than being entirely arbitrary.

Experimental methods further illuminate the relationship between language and cognition. Priming studies reveal connections between words by measuring reaction speed—subjects respond faster to “cat” after seeing “dog” than after an unrelated word. Gating experiments test how much of a word is needed for recognition, showing that speech is continuous rather than segmented as orthography implies. Other studies have uncovered striking links between language and physiology: swearing, for instance, has been shown to increase pain tolerance during stressful tasks.

Modern technologies provide additional insight. Eye-tracking demonstrates how readers interpret sentences incrementally, revising meaning when faced with garden-path structures. Electroencephalography (EEG) captures the brain’s immediate responses to anomalies, such as the N400 signal triggered by semantic incongruities. Functional magnetic resonance imaging (fMRI), in contrast, excels at localizing activity by detecting blood flow, though with less temporal precision and greater expense. Each method carries strengths and trade-offs, offering complementary perspectives on language processing.

Despite these advances, caution remains essential. Language organization in the brain varies across individuals, and much existing research has focused narrowly on monolingual English speakers. The effects of multilingualism, cultural differences, and broader diversity remain insufficiently explored.

The Processes of Language Learning and Their Development

Language acquisition is a complex, multifaceted endeavor, encompassing phonology, morphology, syntax, semantics, and other faculties of speech. Scholars debate whether children acquire language by processes fundamentally distinct from adult learners or whether the mechanisms are essentially similar, differing only in efficiency and flexibility.

Language perception begins in utero. By the thirtieth week of gestation, the fetus responds to sound, though faintly. At birth, infants preferentially attend to speech, particularly the voices of caregivers, and the patterns of the languages they hear. Similarly, infants exposed to sign language observe and respond to visual gestures with heightened attention.

Because infants cannot speak, researchers employ indirect methods to study their perception. High-amplitude sucking measures the infant’s response to novelty: a change from a familiar syllable, such as ba, to a new one, pa, provokes increased sucking, revealing the capacity to discriminate sounds. Initially, infants can distinguish the phonemes of any spoken language, but by the end of the first year they become attuned to the phonemes of their native language(s).

As control over their articulators develops, infants begin babbling, producing syllables without meaning. Children exposed to sign language produce analogous hand movements. Speech addressed to infants, known as child-directed speech, varies across cultures, yet acquisition proceeds with remarkable uniformity. Most children utter their first words by around one year, often simple syllables like mama or dada, with meaning emerging as caregivers interpret these vocalizations.

Language soon integrates with gesture, as in cookie accompanied by reaching, before progressing to rudimentary syntax in the two-word stage, e.g., want cookie. Beyond imitation, children abstract rules, as demonstrated by Jean Berko Gleason’s wug test: when shown a novel creature, a child applies the plural rule, producing wugs. Such evidence indicates that language learning involves pattern recognition and generalization, not mere mimicry.
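The kind of generalization the wug test reveals can be sketched as a rule that applies to words never heard before. The code below is a simplified, spelling-based approximation of the regular English plural; the function name `pluralize` and the list of endings are assumptions made for illustration, and the nonsense nouns are chosen only as examples.

```python
# Endings after which the regular plural is spelled "es" (a rough
# spelling-based stand-in for the sibilant sounds of spoken English).
SIBILANT_ENDINGS = ("s", "x", "z", "ch", "sh")


def pluralize(noun: str) -> str:
    """Apply the regular English plural rule to any noun, known or novel."""
    if noun.endswith(SIBILANT_ENDINGS):
        return noun + "es"
    if len(noun) > 1 and noun.endswith("y") and noun[-2] not in "aeiou":
        return noun[:-1] + "ies"
    return noun + "s"


# Nonsense nouns the rule has never "seen" still receive a plural.
for novel in ["wug", "tass", "gutch"]:
    print(novel, "->", pluralize(novel))
```

Because the function stores no list of known words, its success on novel nouns comes from the rule alone, which is the point of the experiment.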

Errors, too, are informative. A child may initially say went, then goed, reflecting the application of a general past-tense rule before the exceptions are relearned. Acquisition also appears to depend on a critical period, during which early exposure is crucial for normal development. Deprivation of accessible language in childhood, as seen in cases of severely isolated children, leads to permanent deficits. Conversely, children exposed to sign language from infancy acquire it naturally, just as hearing children acquire spoken language.
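The progression from went to goed and back can be sketched as three stages: memorized forms, a rule applied everywhere, and a rule tempered by exceptions. The stage names, the function `past_tense`, and the small exception list below are hypothetical labels chosen for illustration.

```python
# A tiny, illustrative list of irregular past-tense forms.
IRREGULAR_PAST = {"go": "went", "eat": "ate", "run": "ran"}


def regular_past(verb: str) -> str:
    """Apply the general rule: add -ed (or -d after a final e)."""
    return verb + "d" if verb.endswith("e") else verb + "ed"


def past_tense(verb: str, stage: str):
    """Return the past tense a learner might produce at a given stage."""
    if stage == "memorized":
        # Only forms heard and stored; no rule yet (None if unknown).
        return IRREGULAR_PAST.get(verb)
    if stage == "overregularized":
        # The newly discovered rule is applied to every verb.
        return regular_past(verb)
    if stage == "mature":
        # Exceptions take priority; the rule covers everything else.
        return IRREGULAR_PAST.get(verb, regular_past(verb))
    raise ValueError(f"unknown stage: {stage}")


for stage in ["memorized", "overregularized", "mature"]:
    print(stage, "->", past_tense("go", stage))
```

The middle stage produces goed precisely because the rule is working, which is why such errors are taken as evidence of learning rather than of failure.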

Children are inherently adept at acquiring multiple languages. Bilingual or multilingual acquisition occurs effortlessly when languages are introduced early, whereas older learners face constraints. Prior knowledge may scaffold new learning, but established grammatical patterns can interfere—a phenomenon known as language transfer. Motivation, interest, and meaningful engagement significantly enhance adult acquisition.

Multilingualism manifests in varied forms. Domain separation allows different languages to serve distinct social or familial functions. Receptive bilingualism enables comprehension without fluent production, while code-switching blends languages fluidly in discourse. Heritage languages may be fully retained, partially preserved, or lost over generations, depending on social context and transmission. Research confirms that raising children bilingually incurs no cognitive harm and often confers advantages.

The Evolution of Language Through Time

Language is never fixed; it changes across all levels—sounds, words, and grammar. Old English, for instance, shed many of its suffixes by the twelfth century, reshaping its structure. Between 1400 and 1600, the Great Vowel Shift transformed English pronunciation. Yet languages do not change in every respect at once; their transformations are gradual, layered, and selective.

Change often arises through misperception or reanalysis. The word apron began as napron, but was reshaped by the misdivision of a napron into an apron. Necessity, too, drives innovation. Old English pronouns (hē, hēo, hit, hīe) were confusingly similar; Middle English clarified them by introducing she, shortening hit to it, and adopting they from Old Norse. By the fourteenth century, they was already used as a singular pronoun for a person of unspecified gender, a practice still alive today. Other changes, however, spread simply by custom, as in Spanish dialects where casa (house) and caza (hunt) are pronounced differently in some regions but alike in others.

Sometimes, entirely new languages emerge. In 1977, Nicaraguan deaf children, lacking a shared system, spontaneously created Nicaraguan Sign Language, which developed into a full language within a generation. This revealed the mind’s innate capacity to impose order and structure on fragments of communication.

More commonly, languages change through contact. Prolonged interaction may lead to convergence, as when Bantu languages in Zambia adopted click consonants from Khoisan neighbors. Elsewhere, divergence prevails, as in Vanuatu, where related languages evolved apart to remain distinct. When communication is necessary across groups without a shared tongue, pidgins arise as simplified systems. If children acquire a pidgin as their native language, it expands into a full grammar and vocabulary, becoming a creole—as with Haitian Creole, Tok Pisin in Papua New Guinea, and Kriol in Australia. Though born of inequality and colonization, creoles are independent languages, not corrupt forms of their sources, much as French is distinct from Latin.

Tracing language change relies on evidence. Modern scholars consult sound recordings, but for earlier stages they depend on writing. Long records, such as those of Tibetan or English, enable diachronic analysis (study of language through time), while synchronic analysis examines languages as they exist at a given moment. Systematic comparisons reveal kinship: English father, Dutch vader, German Vater, and Icelandic faðir share a common ancestor. Such related forms are cognates, pointing to shared descent.

Through the comparative method, scholars reconstruct ancestral languages. From cognate sets they infer forms such as Proto-Germanic *fadēr, ancestral to all Germanic words for “father.” This in turn relates to Latin pater and Sanskrit pitṛ, showing that Proto-Germanic itself descended from Proto-Indo-European (PIE), spoken about six thousand years ago. PIE left no writing, but systematic sound correspondences (e.g., Latin p matching English f in pairs like pater / father, ped- / foot) reveal its shape.
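The search for regular correspondences can be sketched by tallying which sounds line up across cognate pairs. The example below compares only the first letter of each word, a deliberate simplification; the function name `initial_correspondences` is an assumption, and the Latin forms are given as stems without vowel length.

```python
from collections import Counter

# Latin / English cognate pairs (Latin shown as simplified stems).
COGNATE_PAIRS = [
    ("pater", "father"),
    ("ped", "foot"),
    ("piscis", "fish"),
    ("plenus", "full"),
]


def initial_correspondences(pairs):
    """Count how often each pair of initial letters lines up."""
    return Counter((latin[0], english[0]) for latin, english in pairs)


counts = initial_correspondences(COGNATE_PAIRS)
for (latin_sound, english_sound), n in counts.most_common():
    print(f"Latin {latin_sound} : English {english_sound}  ({n} pairs)")
```

A correspondence that recurs across many unrelated words is unlikely to be chance, and it is this regularity that licenses reconstruction. Actual comparative work aligns every sound in phonetic transcription, not merely the first letter of the spelling.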

Beyond Indo-European, other proto-languages have been reconstructed: Proto-Semitic (ancestor of Arabic, Hebrew, Amharic), Proto-Algonquian (Cree, Ojibwe, Massachusett), Proto-Austronesian (Javanese, Tagalog, Malagasy), Proto-Pama-Nyungan (many Australian Aboriginal languages), and Proto-Bantu (Swahili, Zulu, Shona).

Yet not all languages fit into families. Isolates, such as Basque, Ainu, and perhaps Korean, have no known relatives, either because they truly arose alone or because their kin have vanished. Though mysteries remain, most of the world’s roughly 7,000 languages can be traced into larger families, each preserving echoes of ancient speech.

Through such study, language change is revealed not merely as sound or grammar in motion, but as a record of human history itself—our migrations, contacts, and the enduring creativity of the human mind.

A Survey of the Diverse Tongues of the Earth

The line between language and dialect is blurred. A common measure is mutual intelligibility: if two speakers understand one another, their speech is often deemed a single language; if not, distinct tongues. Yet all speech changes through time and space. Spanish was once a form of Latin, though no moment marked its separation. In villages strung across a region, gradual shifts may yield a dialect continuum, where each community understands its neighbors, yet the extremes are mutually unintelligible. Geography deepens these divisions: mountains, seas, and distance shape tongues apart.
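The dialect continuum shows why mutual intelligibility cannot cleanly divide languages: the relation holds between neighbors yet fails between the extremes. A minimal sketch follows, assuming five hypothetical villages in a line, an invented unit of linguistic distance, and an arbitrary threshold for understanding.

```python
# Hypothetical villages strung along a road, in geographic order.
VILLAGES = ["A", "B", "C", "D", "E"]
STEP = 1        # assumed linguistic distance between adjacent villages
THRESHOLD = 2   # assumed maximum distance at which speech is understood


def distance(v1: str, v2: str) -> int:
    """Linguistic distance, here simply proportional to separation."""
    return abs(VILLAGES.index(v1) - VILLAGES.index(v2)) * STEP


def mutually_intelligible(v1: str, v2: str) -> bool:
    return distance(v1, v2) <= THRESHOLD


# Each village understands its neighbor...
for left, right in zip(VILLAGES, VILLAGES[1:]):
    print(left, right, mutually_intelligible(left, right))

# ...yet the two ends of the chain do not understand each other.
print("A", "E", mutually_intelligible("A", "E"))
```

Since A understands B, B understands C, and so on to E, any attempt to count "languages" by intelligibility alone must draw a boundary somewhere that the speakers themselves would not recognize.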

Migration also alters language. Communities abroad preserve older forms, while the homeland continues to change, so that over generations one language becomes two. Languages spoken by large populations change more quickly than those of small ones. Meanwhile, languages long overlooked, especially signed languages, have come to recognition. Some arise in urban deaf communities, like French Sign Language and Nicaraguan Sign Language; others emerge in villages where deaf and hearing alike share a common system, such as Kata Kolok in Indonesia or Adamorobe Sign Language in Ghana.

Yet the fate of languages is not determined by sound alone, but by politics and power. Hindi and Urdu are mutually intelligible in speech, yet divided by script and shaped toward different literary traditions. Conversely, the many languages of China, often mutually unintelligible, are officially called dialects, their unity maintained by a common script. States strengthen their authority by elevating the speech of capitals as “standard,” leaving rural tongues to wither. Some languages are uncounted by governments altogether, while others have been deliberately suppressed, as in colonial schools that forbade indigenous children their ancestral speech.

The recognition of a language depends not on linguistic criteria alone but on language ideologies—beliefs about the value of particular ways of speaking. Such judgments, bound to history and politics, shape which tongues are counted, preserved, and taught. Preservation itself requires labor and resources. Dictionaries, grammars, and modern technologies demand funding and patronage; thus, languages once recorded are more likely to be supported again, while others remain invisible.

This imbalance hastens decline. Languages without recognition or resources risk falling silent, as children are discouraged or prevented from learning them. Yet renewal is possible. Hebrew was revived in Israel, Wampanoag in New England; Maori and Hawaiian have regained vitality through immersion schools and community efforts. Recognition grants not only legal and educational support but dignity to speakers, affirming their right to inherit and transmit their traditions.

Thus the number of the world’s languages cannot be fixed, for speech is ever in motion, shaped by geography, migration, politics, and power. The truer question is not how many there are, but how they may be sustained. For the value of human language lies not in count alone, but in the lives it carries, the knowledge it preserves, and the justice it upholds.

On the Application of Machines to the Study of Language

The instruction of machines in human language is a task of many stages. First comes the conversion of speech, handwriting, or print into digital text, through means such as speech recognition or optical character recognition. This stage demands precision, for the system must distinguish words, sentence boundaries, and subtle ambiguities—whether one speaks of “a moist towelette” or “a moist owlet.”

Once text is obtained, the machine must attempt interpretation. Words with many senses—such as “bank,” denoting both river’s edge and financial house—must be resolved in context. Relations within a sentence must be parsed, for upon this approximate understanding all further operations depend. With such a foundation, machines may be directed toward useful ends: answering questions, translating between tongues, or guiding travelers. Their output, though grounded in code rather than human comprehension, must finally be rendered again into human form, whether as written text or synthesized speech.
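Resolving a word such as “bank” in context can be sketched by comparing the surrounding words with a short definition of each sense and choosing the sense with the greatest overlap, a simplified form of the Lesk approach. The sense labels, definitions, stopword list, and function names below are all invented for illustration.

```python
# Hand-written sense definitions for a single ambiguous word.
SENSES = {
    "bank": {
        "river edge": "sloping land beside a river or stream where water flows",
        "financial house": "institution that accepts deposits and lends money to customers",
    }
}

# Common words ignored when measuring overlap.
STOPWORDS = {"a", "an", "the", "of", "to", "that", "or", "and", "on", "he", "she"}


def tokens(text: str) -> set:
    """Lowercase the text, strip punctuation, and drop stopwords."""
    return {w.strip(".,;!?").lower() for w in text.split()} - STOPWORDS


def disambiguate(word: str, sentence: str) -> str:
    """Pick the sense whose definition shares the most words with the sentence."""
    context = tokens(sentence)
    senses = SENSES[word]
    return max(senses, key=lambda s: len(context & tokens(senses[s])))


print(disambiguate("bank", "She sat on the bank of the river and watched the water."))
print(disambiguate("bank", "He went to the bank to deposit money."))
```

When no definition overlaps with the context, this sketch simply returns the first sense listed, which shows how fragile word counting is compared with the statistical models used in practice.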

This division of labor within natural language processing allows efficiency, for components may be reused across tasks. A system trained in speech recognition may serve dictation, translation, or inquiry alike. Certain processes, like speech recognition and synthesis, are general; others must be crafted anew for each language.

Yet not all tongues receive equal attention. Spoken languages have seen remarkable progress, while sign languages remain neglected. True recognition of signing would require analysis not only of handshapes but of movement, body posture, and facial expression. Moreover, signed languages are not mere renderings of speech but full languages in themselves, each distinct. Proposals such as “sign-to-speech gloves,” focused only on hand positions, fail to capture this reality and serve neither Deaf users nor true translation.

At this point arises the question: if machines process language, do they understand it? The answer is no. A beast trained to press levers labeled “I,” “want,” and “food” does not comprehend words, but merely associates patterns with reward. So too machines: their fluency is an effect of pattern recognition, not awareness.

Early efforts relied on painstakingly encoded rules: plurals formed by adding “s,” with exceptions like “children” listed by hand. This proved unwieldy, giving way to data-driven methods in which machines infer patterns from vast corpora. Neural networks, loosely modeled on networks of neurons, advanced the field, though their inner workings are opaque. Early in training they err absurdly, producing only “eeeeee” upon learning that “e” is common, yet refinement yields greater sophistication.
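The “eeeeee” failure can be reproduced with the crudest possible data-driven model: one that knows only which character is most frequent. The sketch below uses simple counting rather than a neural network, and the toy corpus and function names are assumptions made for illustration; it then shows how conditioning on the previous character already changes the prediction.

```python
from collections import Counter, defaultdict

# A toy corpus; any English text in which "e" dominates would do.
CORPUS = "the bees see the green trees"


def generate_unigram(text: str, length: int) -> str:
    """Always emit the single most frequent character in the text."""
    most_common_char = Counter(text).most_common(1)[0][0]
    return most_common_char * length


def next_char_counts(text: str):
    """Count which characters follow each character."""
    table = defaultdict(Counter)
    for prev, nxt in zip(text, text[1:]):
        table[prev][nxt] += 1
    return table


def most_likely_next(text: str, prev: str) -> str:
    """Predict the next character given the previous one."""
    return next_char_counts(text)[prev].most_common(1)[0][0]


print(generate_unigram(CORPUS, 6))           # frequency alone
print(most_likely_next(CORPUS, "t"))         # after "t", context matters
print(most_likely_next(CORPUS, "h"))
```

Frequency alone yields a string of one letter, while one character of context is enough to predict that “h” tends to follow “t.” Modern systems extend the same idea to far longer contexts and far richer statistics.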

Training proceeds chiefly by two methods. In supervised learning, paired data is supplied: text with speech, questions with answers, sentences with translations. This yields strong results but requires costly human labor to prepare. In unsupervised learning, systems ingest unpaired data, seeking patterns without guidance. A compromise, semi-supervised learning, combines smaller sets of paired data with larger unpaired collections.

Yet all data is human-made, and human bias permeates it. Harini Suresh has outlined how such bias enters machine learning:

Historical bias: Existing inequalities are reproduced, as when the gender-neutral Turkish pronoun o is translated into English as “he is a doctor” but “she is a nurse.”

Representation bias: Many languages and groups are underrepresented, leaving tools ill-suited for universal use.

Measurement bias: Training on sources like the Bible, distant from everyday speech, skews translations.

Aggregation bias: Treating all varieties of a language as one disadvantages minority dialects, such as African American English.

Evaluation bias: Success metrics ill-suited to some languages distort system performance.

Deployment bias: Tools built for benign purposes may be misapplied, such as style analysis used to unmask informants.

Identifying these biases is the first step toward correction, and ethical reflection must accompany technical mastery. For language technology is not neutral: it shapes communication, culture, and power.

Yet even as machines advance, their “knowledge” remains unlike our own. They discern patterns, but they do not understand. And amid these artifices, we must not forget the oldest of all language technologies—the written word—to which our inquiry now turns.

The Systems and Forms of Written Language: A Discourse on Orthography

A writing system rests upon two foundations: visible symbols, or graphemes, and the meanings they bear. These symbols may represent sounds, syllables, or entire words.

When each grapheme corresponds to a phoneme, the system is called an alphabet. The Latin alphabet, used to write English, Finnish, Vietnamese, and Swahili, is one such system, as are the Cyrillic and Greek scripts. Yet alphabets are rarely pure: diacritical marks, letter combinations, silent remnants, and borrowings complicate their use, as in English with th, sh, or the silent k in knee.

By contrast, an abjad records mainly consonants, leaving vowels to be inferred or optionally marked. This suits Semitic languages such as Arabic and Hebrew, whose roots depend chiefly upon consonantal patterns.
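Why consonant-only writing suits a root-based language can be sketched by stripping the vowels from romanized words. The function name `consonant_skeleton` is an assumption, the Arabic words are given in simplified Latin transliteration, and the sketch removes every vowel, whereas actual Arabic orthography does write long vowels.

```python
VOWELS = set("aeiou")


def consonant_skeleton(word: str) -> str:
    """Return the word with all vowel letters removed."""
    return "".join(ch for ch in word if ch.lower() not in VOWELS)


# Arabic words built on the root k-t-b (simplified transliteration):
# kitab "book", katib "writer", kataba "he wrote".
for word in ["kitab", "katib", "kataba"]:
    print(word, "->", consonant_skeleton(word))

# Unrelated English words collapse into the same skeleton.
for word in ["bat", "bit", "but", "bet"]:
    print(word, "->", consonant_skeleton(word))
```

In the Arabic examples the shared skeleton ktb preserves the core meaning of writing, so context readily supplies the vowels. In English the skeleton bt merges words with nothing in common, which suggests why an abjad would serve English poorly.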

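A syllabary assigns each symbol to a syllable rather than a single sound, as in Japanese kana or the Cherokee syllabary. The Nāgarī scripts of India and Inuktitut syllabics work on a related principle, though they are usually classed as abugidas, in which a consonant symbol is modified to mark its vowel. Such systems suit languages where the number of distinct syllables is limited.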

More intricate still is logography, where each character represents a word or morpheme. Chinese script exemplifies this, though few systems remain wholly logographic. Japanese, for instance, blends Chinese characters (kanji) with syllabaries (kana).

The nature of a language often shapes its script: abjads for consonant-root systems, syllabaries for languages with few syllables, alphabets for efficient sound-symbol mapping, and logographies for broad semantic economy across dialects.

Material constraints also guided form. Stone carving favored angular letters; brushwork on silk encouraged fluid strokes. Even the Inca employed the quipu of knotted cords, though its full meaning remains uncertain.

True writing, capable of conveying complete meaning, arose independently at least three times: in Mesopotamia (cuneiform, ca. 3200 BCE), in China (oracle bone script, ca. 1250 BCE), and in Mesoamerica (Olmec and later Mayan glyphs, ca. 1000 BCE). From these sources, most later scripts descend or draw inspiration.

Letters themselves evolved across cultures. The Phoenician bēt (“house”) became our modern B. Others transformed more radically: wāw (“hook”) gave rise to five different Latin letters—F, U, V, W, and Y. Tools of writing also determined survival: Old English letters like thorn (þ) and eth (ð) vanished with the advent of Continental printing presses, replaced by th or, misleadingly, y as in “Ye Olde.”

At times, entirely new scripts were devised. Sequoyah created the Cherokee syllabary in the 19th century, enabling native literacy. In Korea, King Sejong (1443) introduced Hangul, whose symbols mirror the shape of the mouth in articulation—an ingenious and scientific design.

Reform has also shaped orthography. Atatürk replaced Ottoman Arabic script with Latin for Turkish (1928). Noah Webster simplified American spelling (color, center) to distinguish it from British forms. Standardized spelling itself emerged only with the printing press; before then, variation abounded—even Shakespeare wrote his name six different ways.

Today, the digital age has loosened these standards. Online writing bypasses editorial control, embracing abbreviations, symbols, and emojis to convey nuance. Meanwhile, sign languages, once unwritten, now flourish through video.

Thus, the history of writing is one of invention, adaptation, and transformation. From clay tablets to digital screens, from carved letters to living scripts, orthography remains a mirror of language itself—ever shifting, yet enduring as humanity’s most powerful tool of memory and communication.
