Some Simplifications On Literature, Philosophy, and Psychology

Some old notes on these subjects, reorganized and rewritten to make them easier to follow.

A Condensed Philosophy of Literature

Human beings are distinguished not merely by reason or instinct, but by the unique capacity to create and live through stories. If our biology is the hardware of an animal, storytelling is the software that defines our humanity—an evolving program of myths, emotions, and meaning. While our bodies remain largely unchanged, our collective narrative constantly shifts, shaping identities, cultures, and civilizations.

From our earliest moments, stories have given coherence to a chaotic world. They offer meaning where the universe gives none, filling the void with myth, memory, and metaphor. More than data or fact, the human mind retains and responds to stories because they mirror emotional truth and social bonds. As Camus argued, in a silent universe, we invent meaning—and stories become the most powerful response to absurdity.

Emotion, not reason, drives this impulse. While reason must be taught, emotion is innate—binding individuals into communities, urging us to sacrifice for ideals, and inspiring acts that logic alone cannot explain. Stories, rich with emotional weight, transmit cultural memory, morality, and purpose. They bind groups beyond kinship and form the foundations of religions, nations, and empires. At scale, they hold humanity together.

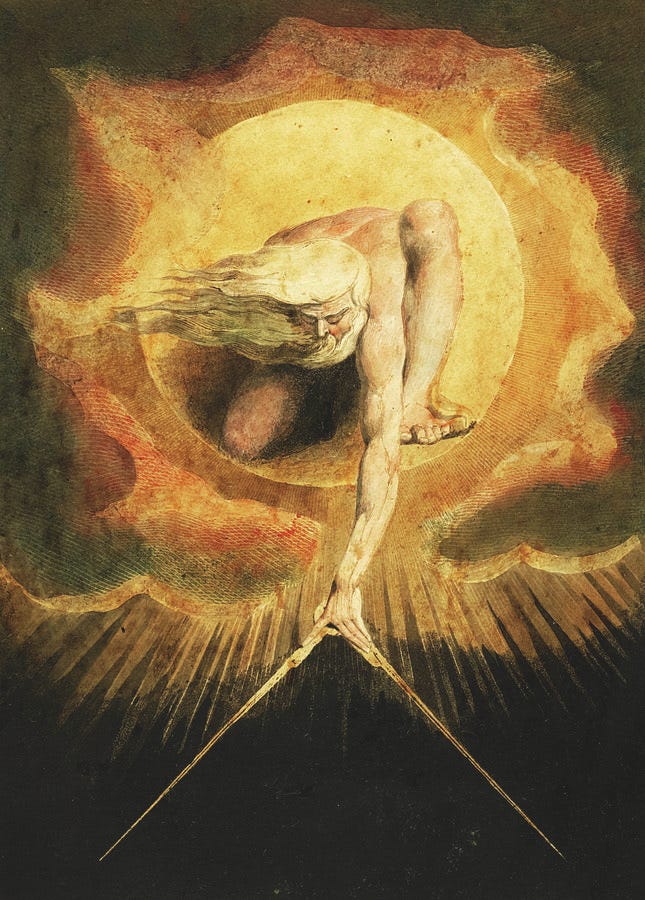

Carl Jung saw in story the architecture of the psyche itself—archetypes shared across time and culture, embedded in the collective unconscious. Literature, then, is not just cultural ornament, but the DNA of human consciousness. It carries the dreams, fears, and patterns of human life across generations.

Storytelling predates philosophy, offering concrete experience where philosophy offers abstraction. Literature grounds the question of existence in narrative—rooted in time, place, character, and conflict. Philosophy asks "why"; literature asks "how." Where philosophy persuades by reason, literature persuades by empathy. The greatest thinkers—from Plato to Dostoevsky—often used fiction to express truths too complex for logic alone.

Indeed, storytelling is ancient. Around campfires and cave walls, early humans shared tales of hunt and survival, failure and triumph. These stories encoded lessons vital for survival and social cohesion. Evolution shaped our love for narrative just as surely as it shaped our appetite for food or sex. Stories taught us how to live, reproduce, and belong.

Even today, stories remain our most potent tool for connection. Whether shared over coffee or through novels, they forge empathy and understanding. In storytelling, both listener and teller are transformed: listeners gain insight, and speakers find clarity. As Italo Calvino observed, stories polished by repetition become as refined and enduring as river stones.

The history of literature traces humanity’s shifting concerns. The earliest epics, like Gilgamesh and the Iliad, confronted mortality and the divine. Later, literature explored love, reason, identity, and empire. The Romantic and realist movements reflected the tensions between nature and society, emotion and fact. The 20th century, shaped by psychology and postmodern doubt, turned literature inward and fragmented. Today, literature must face the challenge of artificial intelligence—machines that can mimic narrative but not meaning.

Throughout, the tension between style and substance persists. Some argue that meaning transcends form; others that how a story is told is itself essential. In truth, the two are inseparable. Form gives flesh to meaning; without it, stories are hollow.

History and literature both narrate human experience, but they differ in purpose. History is factual and sociological, often told by the victors. Literature is emotional and psychological, giving voice to the outsider, the dreamer, and the displaced. Where history records what happened, literature reveals what it felt like to live through it. It speaks not to the mind alone, but to the heart and soul.

History speaks in broad strokes; literature whispers the intimate. Where history chronicles civilizations, literature elevates the individual—especially the outsider—offering voice to the forgotten and forging a legacy of human feeling rather than conquest.

Language emerged as the earliest thread of human consciousness. From primal utterances around firelight came stories—oral traditions smoothed by centuries of repetition. With the advent of writing, these tales were etched into permanence: on clay tablets, papyrus scrolls, and later into the vast archive of the digital age. From The Epic of Gilgamesh to today’s hyperlinked world, literature has endured by adapting its form while preserving its core: the search for meaning.

Gutenberg’s printing press democratized the written word, catalyzing centuries of discovery and revolution. The internet, in turn, exploded access to literature, though at the cost of overwhelming abundance. Only stories of deep resonance survive this flood.

Storytelling, as Aristotle observed, follows a natural arc—beginning, middle, end—mirroring life itself. Rooted in mortality, stories offer a response to death, crafting continuity where none is promised. Religion, ideology, and identity all draw power from narrative. Myths—whether divine or political—bind societies through a shared sense of purpose.

Ideologies, too, rise on stories. Socialism, fascism, nationalism: each spins a compelling narrative to unite believers, just as ancient religions once did. Without story, belief collapses; without belief, cohesion falters.

Unlike identity, which is static, story is dynamic—a vector aimed toward the future. This is mirrored in human relationships, where women, attuned to biological consequence, often seek the “direction” of a bond. Their stories ask: where is this going? Men, by contrast, often remain rooted in the immediacy of the act. In such differences, storytelling reveals its evolutionary origins and emotional depth.

Narratives shape values. A model dresses impeccably not from vanity but to fulfill a story of beauty; a miner risks his life for a future rooted in love or family. Mothers endure pain out of devotion to the tale they embody—the story of sacrifice and continuation. In each case, meaning is carved from effort through narrative.

Ultimately, stories serve as torches in the dark. As fire made food digestible, stories make existence bearable. They frame death not as finality, but as a moment to be understood, transcended, or transformed.

The Epic of Gilgamesh, one of our earliest surviving literary works, dramatizes this quest. Gilgamesh’s grief over Enkidu propels him to seek immortality, only to discover that death is inescapable. Yet in returning to Uruk, he recognizes that legacy (what we build, create, and share) grants a form of endurance. Mortality is not conquered, but redeemed through memory.

Centuries later, Homer’s Iliad accepts mortality without resistance. Achilles and Hector face death with full knowledge of their fate, pursuing glory despite its cost. Here, death is the necessary frame for meaning; to live heroically is to accept its inevitability.

In contrast, the Daoist Zhuangzi sees life and death not as opposites, but as aspects of a single flow. Its most famous passage—the “Butterfly Dream”—dissolves the boundary between dream and waking, self and other. Death is not an ending, but a transformation, part of the cosmic rhythm.

This triad—Gilgamesh striving against death, Homeric heroes accepting it, and Zhuangzi embracing its continuity—represents a spectrum of human responses to finitude.

In One Thousand and One Nights, storytelling becomes a literal act of survival. Scheherazade postpones death by weaving tales that captivate her executioner. Her nightly stories are not just entertainment; they are lifelines, demonstrating that narrative can suspend even fate.

This theme echoes through letters from prisoners, diaries of exiles, and testimonies of the oppressed. Storytelling endures where nothing else does. It gives shape to pain, offers meaning to suffering, and affirms life in the face of death.

In every culture and age, literature stands as humanity’s most enduring response to its most ancient question: How shall we live, knowing we must die?

Dante’s Divine Comedy presents the afterlife as a moral ascent, from Hell’s punishments to the purgation of sin and the final vision of divine truth. Only the Christian faithful may enter Paradise, while even the noble Virgil, a symbol of human reason, is barred for having lived before Christ. Like the Egyptian Book of the Dead or the Tibetan Book of the Dead, Dante’s work transforms death into a journey where one’s soul confronts its virtues and vices—a narrative structure through which mortality becomes a path to transcendence.

This theme recurs in The Cloud Dream of the Nine, a 17th-century Korean novel set in Tang China. A monk’s moment of temptation leads to his rebirth as Yang So-yu, a successful official surrounded by pleasure, power, and wives. Yet at the peak of his fortune, he realizes the hollowness of desire. His return to monastic life affirms the impermanence of worldly attachments and the deeper fulfillment of spiritual simplicity.

Marcel Proust, writing in 20th-century France, treats time rather than death as life’s great adversary. His novel In Search of Lost Time shows how sensory memory (a taste, a scent) can briefly dissolve time’s grip, returning us to earlier selves. Yet for Proust, true victory over time lies in art. A well-crafted work endures, allowing its creator to live on in the minds of future generations.

Across cultures, storytelling itself becomes a vehicle for immortality. Religions encode this impulse, promising eternal life through belief, while fiction allows authors to leave lasting impressions beyond death. As religious authority wanes, hedonism and self-expression rise to prominence—but the desire to endure, to outlive death in memory or art, remains constant.

The Epic of Gilgamesh probes this longing directly. Gilgamesh seeks eternal life but finds that it leads only to loneliness. The immortal Utnapishtim lives in isolation, cut off from the rhythms of mortal existence. Immortality becomes not a gift, but a curse—a theme echoed in Camus’ Sisyphus, condemned to an eternal, meaningless task.

Humanity has always struggled with mortality. From ancient embalming to modern cosmetic surgery, we resist decay. Yet the most lasting form of preservation is not physical—it is narrative. Figures like Gilgamesh live on not through their bodies, but through the stories they left behind. As existential thinkers from Kierkegaard to Camus recognized, literature uniquely bridges the gap between death and meaning.

But mortality is only one half of the human condition. The other is conflict. Darwin’s theory of natural selection reveals that life is struggle—not only against nature, but against other humans. Literature has long chronicled this reality, often framing war within mythic narratives that unify peoples under banners of good and evil. Shared stories forge collective identities and justify collective actions.

The Mahabharata, one of India’s oldest epics, centers on a dynastic war over the throne of Hastinapura. Its spiritual heart, the Bhagavad Gita, stages a dialogue between the warrior Arjuna and the god Krishna. Torn between duty and morality, Arjuna hesitates to fight. But Krishna urges him to transcend personal feeling and fulfill his dharma—the duty to protect his people. The war becomes a moral crucible in which Arjuna must learn that righteousness sometimes requires violence.

Homer’s Iliad, composed in ancient Greece, also centers on war, though its motivations differ. Here, conflict arises not from duty but from desire: specifically, the abduction of Helen. The tale reveals how primal drives, such as revenge and sexual competition, can ignite vast destruction. Achilles, the Greek hero, rejoins the battle only after the death of his beloved friend Patroclus, underscoring how emotion, not strategy, drives action.

In both epics, gods intervene in mortal affairs, reflecting the belief that war and fate are intertwined. But while the Mahabharata frames war as a sacred duty, the Iliad sees it as the product of human passion and divine meddling. Helen’s beauty and Achilles’ rage become the sparks for prolonged suffering, mirroring history’s many wars fought over honor, love, or vengeance.

These stories reveal that survival, legacy, and desire are the engines of human history. Whether through heroic deeds, spiritual transcendence, or artistic creation, humanity continually seeks to outlast its finitude. In this endeavor, storytelling remains our most enduring act—a torch passed across generations, illuminating the path through mortality, meaning, and memory.

While early epics often celebrated empire-building, Ferdowsi’s Shahnameh mourns the fall of Persian civilization. Composed in the late 10th and early 11th centuries and comprising nearly 50,000 couplets, this monumental work spans mythical origins to the 7th-century Arab conquest, dividing history into mythical, heroic, and historical epochs. Central to its heroic age is Rostam, a mighty but flawed champion whose tragic slaying of his own son, Sohrab, captures the human cost of war and fate. The Shahnameh chronicles not only battles but the cyclical rise and fall of kings, empires, and moral ideals, presenting evil and virtue in both enemy and hero alike. In doing so, Ferdowsi preserves not just Persian history, but its language and cultural soul.

In Northern Europe, the Old English epic Beowulf tells of a lone hero confronting three monstrous foes (Grendel, Grendel’s vengeful mother, and a dragon), reflecting humanity’s ancient struggle with nature and death. Though set in pagan Scandinavia, the story was preserved by Christian scribes, blending mythic archetypes with evolving religious worldviews. The Icelandic sagas, such as the 13th-century Njal’s Saga, shift from monsters to human feuds, recording real conflicts in prose form. These works valorize both physical strength and legal cunning, echoing cultural ideals of honor and vengeance in a harsh landscape.

Across the world in China, Luo Guanzhong’s 14th-century Romance of the Three Kingdoms dramatizes the collapse of the Han dynasty and the ensuing civil wars. Blending fact and fiction, it explores loyalty, strategy, and shifting alliances amid political chaos. Though rooted in warfare, the epic ultimately affirms that peace and order are the natural human aim, though they must be achieved through conflict. This enduring narrative tradition shaped political thinking in East Asia for centuries.

These global epics share a common insight: storytelling is born from mortality and shaped by conflict. Early epics like the Mahabharata and Iliad defend the survival of empires; later works like the Shahnameh and Three Kingdoms lament their decline. All look backward, preserving lost worlds in memory.

Conflict remains the engine of narrative, but another primal force, the desire for love, also defines literature. Romance takes its name from the Old French romanz, a tale told in the vernacular rather than in Latin, and it evolved into stories about passion, seduction, jealousy, and betrayal. While conflict provides the structural core of stories, romance decorates them with emotional richness. The sexual instinct, second only to hunger, drives countless narratives, shaping the themes of courtship, heartbreak, and even violence.

In sum, epic literature reflects humanity’s core instincts: the struggle for survival, the desire for love, and the awareness of death. Across cultures, these impulses have forged enduring stories that preserve civilizations long after their fall.

Before European chivalric tales took shape, Japan produced what is widely considered the world’s first novel: The Tale of Genji by Murasaki Shikibu, written in the early 11th century. Set in the Heian court, it explores the romantic and psychological life of Prince Genji, whose pursuit of idealized love reveals deep emotional vulnerability and existential longing. The novel’s introspective tone, feminine perspective, and complex characterization mark it as a profound meditation on love, loss, and the impermanence of beauty.

In Persia, Nizami Ganjavi’s Leyli o Majnun (1192) stands as a pinnacle of romantic poetry. Reworking an old Arab legend and drawing on earlier Persian romances like Gorgani’s Vis and Ramin, Nizami infuses the story with spiritual depth, portraying love as a transcendent force. Majnun’s madness and isolation reflect the impossibility of reconciling idealized desire with social constraints, elevating the tale into a Sufi allegory of longing and renunciation.

These Eastern narratives would echo in European medieval literature, where the chivalric romance emerged. Geoffrey of Monmouth’s Historia Regum Britanniae (1138) introduced the Arthurian tradition, leading to works like Tristan and Iseult and Chrétien de Troyes’ Lancelot. These tales shifted focus from heroic warfare to romantic devotion, establishing enduring motifs like the knight-errant and the damsel in distress, while reinforcing gender roles of heroic masculinity and passive femininity.

Shakespeare’s Romeo and Juliet later introduced a tragic counterpoint, mirroring the Eastern focus on doomed love. In 17th-century China, The Plum in the Golden Vase (1610) offered a bold departure, portraying sexuality as integral to daily life and critiquing the corrosive pursuit of wealth and pleasure. Its psychological and social realism led to censorship, yet its literary value endures.

In 18th-century France, Pierre Choderlos de Laclos’ Dangerous Liaisons (1782) exposed aristocratic decadence through an epistolary novel of manipulation and seduction. Stripped of moral pretenses, love becomes a calculated contest. Like Genji, it explores the pursuit of elusive desire, but within a framework of power and conquest, revealing gendered strategies in the dance of attraction.

Across cultures, these works reflect the evolving nature of love—idealized, tragic, sensual, or cynical—while revealing shared human yearnings shaped by time, society, and psychology.

Jane Austen (1775–1817) refined the English novel with six works exploring romantic courtship within the constraints of class, wealth, and gender. In Pride and Prejudice (1813), Elizabeth Bennet’s wit and critical eye collide with Mr. Darcy’s pride, echoing a broader social truth: women, like females in nature, are the discerning sex, forced to judge suitors amid a world of misleading signals. Austen’s novels, often formulaic in structure, probe deep psychological terrain, illustrating how mating rituals are shaped by status, emotion, and perception. Beneath the romance lies a realist’s acknowledgment of the role money plays in marriage—where affection must often bow to economic necessity.

As literature evolved, themes of death gave way to war, and war to sex, now followed, perhaps, by humor. Comedy, ancient and enduring, reflects humanity’s resilience. The oldest known joke, from Sumer, and the god Dionysus of Greece both attest to laughter’s primal roots. Greek playwrights like Aristophanes turned comedy into political and social critique, a tradition later inherited by the Roman author Apuleius.

In The Golden Ass (2nd century CE), Apuleius presents Lucius, a man transformed into a donkey after dabbling in magic. His misadventures—mocked, exploited, and nearly slaughtered—satirize human folly. Eventually redeemed by the goddess Isis and restored to human form, Lucius's transformation reflects both spiritual awakening and psychological survival. As a proto-novel, The Golden Ass interlaces comedy with moral and philosophical insight, foreshadowing later picaresque narratives.

In Renaissance France, François Rabelais’s Gargantua and Pantagruel (1532–1564) offered grotesque, satirical adventures of two giants, blending erudition with bawdy humor. Rabelais's irreverence—mocking religion, education, and bodily functions—championed laughter as a tool of human courage and defiance. His work bridged medieval carnivalesque literature with the emerging modern novel, inspiring generations of writers across Europe.

Miguel de Cervantes synthesized these traditions in Don Quixote (1605, 1615), widely regarded as the first modern novel. Alonso Quixano, a deluded reader of romances, reinvents himself as Don Quixote, seeking knightly glory in a disenchanted world. Accompanied by the grounded Sancho Panza, Quixote turns windmills into giants and inns into castles, acting out tales that shaped his consciousness.

Cervantes, drawing on his own hardships, crafts a narrative that blends tragedy with absurdity. In Part Two, Don Quixote gains self-awareness, realizing the pain his fantasies have caused. Yet Cervantes suggests that humans are driven by the stories they absorb—archetypal scripts embedded in memory, emotion, and culture. Like Lucius, Don Quixote lives through delusion, suffers for it, and emerges altered—if not victorious, then redeemed.

These writers—Austen, Apuleius, Rabelais, and Cervantes—chart a literary evolution from courtship to comedy, from social realism to metafiction. Each reveals how storytelling reflects and shapes the human condition: our need for love, our appetite for laughter, and our endless search for meaning amid the absurd.

In the 1760s, Laurence Sterne’s Tristram Shandy emerged as a radical challenge to Enlightenment rationality. A clergyman by trade, Sterne mocked the Age of Reason with a novel defined by digressions, absurdity, and fragmented narrative. Its protagonist, Tristram, never quite arrives at telling his own story, constantly interrupted by accidents, tangents, and the misadventures of those around him.

Tristram’s life begins in chaos: his conception is interrupted, his nose is crushed at birth, his name is botched at the christening, and a falling window sash circumcises him by accident. These mishaps underscore Sterne’s core message: life is ruled not by reason, but by chance. His father, Walter, obsessed with rational systems, fails at every turn to impose order on the unpredictable.

Parallel to Tristram’s misadventures is the subplot of Uncle Toby, a wounded veteran whose romantic and military obsessions converge in a comical attempt to woo a widow by reenacting battles in his backyard. His failures in both war and love reinforce Sterne’s view of human ambition as fundamentally flawed and comic.

The novel’s structure mirrors its theme: fragmented, recursive, and resistant to linearity. Sterne suggests that storytelling itself must reflect the unpredictability of life—a chaos no rational system can contain. In this way, Tristram Shandy becomes a philosophical comedy, mocking both the human desire for meaning and the Enlightenment’s confidence in reason.

This spirit found a powerful echo in Brazilian literature with Machado de Assis’s The Posthumous Memoirs of Brás Cubas (1881). Inspired by Sterne, Assis lets a dead man narrate from beyond the grave; freed from the burdens of life, he recounts his failures with sardonic humor. From this posthumous vantage point, Brás Cubas reflects on mortality, desire, and vanity, suggesting that all human striving ends in the same oblivion: fertilizer for the worms.

Assis, like Sterne, sees comedy as a response to the absurd. Death equalizes all, rendering the struggles of kings and commoners alike laughable in retrospect. Life, viewed from the outside, is a blend of death, violence, sex, and laughter—the elemental forces that shape human stories.

From ancient superstition to modern rationalism, humanity has sought to explain these forces. But with the Enlightenment came a shift: gods gave way to reason, and science became the lens through which we examined nature, mind, and society. The Age of Rationality, rising in 17th- and 18th-century Europe, replaced divine mystery with systematic explanation.

Yet Tristram Shandy and Brás Cubas remind us that rational systems cannot fully contain the human experience. Misunderstandings, accidents, and delusions persist, even under the banner of reason. Comedy becomes the medium through which we confront these inconsistencies, embracing the disorder beneath the surface of order.

In this evolution of literature, we trace a movement from tragedy and epic toward the comic and absurd—not to diminish the seriousness of life, but to acknowledge its unpredictability. Through laughter, Sterne and Assis affirm a deeper truth: that amidst chaos and mortality, the human spirit endures—digressing, stumbling, and laughing all the way.

In the early 17th century, Shakespeare’s Hamlet dramatized the inner turmoil of a prince navigating a court rife with political intrigue. Unlike Don Quixote, whose passion blinds him to reality, Hamlet embodies the rational mind—calculating, skeptical, and paralyzed by choice. His famous soliloquy, “To be or not to be,” captures the existential burden of modernity: the anxiety that comes with freedom and responsibility. As Søren Kierkegaard later observed, such anxiety is the price of rational agency.

Shakespeare's play heralded a shift in literature toward characters who operate through strategic reasoning rather than passion or instinct. Hamlet feigns madness to trap his uncle, yet his elaborate schemes ultimately collapse into tragedy—revealing both the power and limits of rational thought. This marks a pivotal turn in Western literature, where rationality, not fate or divine will, becomes the primary force shaping human action.

Just decades later, René Descartes would declare, “I think, therefore I am,” defining a new philosophical foundation rooted in reason. In this age, the rational subject emerges as both conqueror and sufferer, seeking control over self and world while burdened by internal doubt.

Daniel Defoe’s Robinson Crusoe (1719) embodies this ideal of rational self-mastery. Stranded on a deserted island, Crusoe survives through reason, labor, and order—becoming a symbol of Enlightenment individualism and colonial ambition. His story mirrors the rise of economic autonomy in Europe, where one’s fate is no longer dictated by birth, but by enterprise and personal discipline. The solitary castaway becomes the sovereign of his own world.

This ethos fueled the European expansion that gave rise to settler societies in the Americas and Australasia. The promise of freedom and self-determination lured many, but the pursuit of happiness often fell short, leaving individuals to confront the deeper paradox of autonomy: the struggle for meaning in isolation.

Voltaire’s Candide (1759) responded to this rationalist optimism with sharp satire. A disciple of the philosopher Pangloss, Candide begins his journey convinced that all is for the best. But through war, disaster, and disillusionment, he comes to see the limits of philosophy when confronted with human suffering. His final conclusion, “we must cultivate our garden,” calls for grounded action over abstract speculation. Voltaire replaces metaphysical answers with personal responsibility, offering a secular wisdom for a world no longer governed by divine certainties.

Together, Hamlet, Robinson Crusoe, and Candide trace the evolution of the rational self. From the anxious thinker to the pragmatic survivor to the disillusioned realist, these figures reflect the Enlightenment’s central tension: the triumph of reason and its confrontation with human limitation. As Western man sought mastery over nature and fate, he discovered instead the complexities of freedom, the fragility of meaning, and the enduring need to create purpose amidst the chaos of existence.

In Frankenstein (1818), Mary Shelley warns of the dangers inherent in the unchecked pursuit of scientific knowledge. Victor Frankenstein, a brilliant young scientist, creates life through rational experimentation but recoils in horror from the monstrous being he brings into existence. Abandoned and reviled, the creature turns against its maker, exposing the ethical void that can emerge when human ambition outpaces moral responsibility. Subtitled The Modern Prometheus, Shelley’s novel parallels the Greek myth of hubris and punishment, suggesting that reason, untempered by compassion, can yield monstrous outcomes.

Shelley’s critique reflects Enlightenment anxieties: the dream of mastery over nature collides with the unforeseen consequences of that control. As Francisco Goya warned in The Sleep of Reason Produces Monsters (1799), rationality without conscience may conjure horrors rather than progress. In Frankenstein, the birth of a man-made being becomes an allegory for the modern condition—an age in which technological power often exceeds our ability to wield it wisely.

This theme recurs in Herman Melville’s Moby-Dick (1851), where Captain Ahab's obsessive pursuit of the white whale mirrors humanity’s relentless drive to dominate the natural world. Ahab’s quest leads to destruction, underscoring the self-destructive potential of pride and overreach. Both Melville and Shelley depict the Enlightenment’s shadow: a world where the promise of reason can degenerate into madness, and where domination of nature risks turning against the dominator.

As industrialization transformed cities and societies, literature turned to the psychological and social consequences of modernity. In Great Expectations (1861), Charles Dickens examines how industrial capitalism stirs both ambition and alienation. Pip, a poor orphan, dreams of rising in society but finds disillusionment at the summit. His benefactor, a former convict grown wealthy through colonial labor, reveals the ambiguous morality underpinning Pip’s advancement. Dickens captures the emotional coldness of modern life, its wealth accompanied by social isolation and moral confusion, yet still affirms the possibility of growth through humility and perseverance.

While Dickens focuses on class, George Eliot addresses gender. In Middlemarch (1871–72), she explores the limitations imposed on women within a patriarchal order. Her protagonist, Dorothea Brooke, seeks purpose and intellectual fulfillment beyond marriage and domesticity. Eliot presents modernity as a moment of awakening: a slow but irreversible shift in consciousness where women begin to claim their own destinies. Her work anticipates the broader feminist movement, portraying women not as passive subjects but as moral and intellectual agents.

Together, these authors trace the evolution of the modern self—ambitious, anxious, and increasingly aware of its moral responsibilities. Shelley and Melville issue warnings against the hubris of scientific and imperial overreach. Dickens and Eliot turn inward, examining how modern structures shape individual aspiration and identity. Reason, they suggest, is neither savior nor villain but a force to be guided. Its power demands ethical reflection, lest it produce monsters—both literal and figurative.

Franz Kafka’s The Metamorphosis (1915) presents the tragic descent of Gregor Samsa, a man who awakens to find himself transformed into an insect. Once the sole provider for his family, Gregor is rendered useless by his new form, which provokes revulsion from his employer, his parents, and eventually even his sister. No longer seen as a person, he is reduced to a burden, an expendable object in a world that values individuals solely by their economic function. Starved and abandoned, he dies quietly, and his family, unshackled by his death, begins anew. Kafka’s fable exposes the brutal logic of modernity: the worth of a human being measured not by dignity, but by productivity.

This vision reflects Kafka’s own anxieties—frailty, obligation, alienation—and critiques a society enthralled by reason and efficiency. In such a world, identity becomes synonymous with function. Rationalism, once a promise of liberation, becomes a cold mechanism of exclusion, indifferent to suffering. Kafka captures the paradox of the modern age: the more humanity exalts reason, the more it risks erasing the irrational, emotional, and sacred elements that define human life.

Against this mechanistic order, the Romantic movement arose as a rebellion. As industrialization darkened the cities of Europe, Romantic thinkers and artists turned back to nature, emotion, and the imagination. They sought to restore the Dionysian spirit—ecstatic, chaotic, alive—which Enlightenment rationality had suppressed in favor of Apollonian order and control. In literature, the hero transformed from a rational schemer into a passionate soul at war with the modern world.

Jean-Jacques Rousseau foresaw this in Discourse on the Arts and Sciences (1750), warning that reason, left unchecked, would deaden the soul. The German Sturm und Drang movement soon followed, celebrating unrestrained emotion and genius unconstrained by convention. In Britain, poets like Wordsworth, Coleridge, and Byron embraced this ethos, fleeing industrial life and elevating personal expression over rigid rationalism.

Johann Wolfgang von Goethe’s The Sorrows of Young Werther (1774) crystallized this Romantic ideal. Werther, a sensitive artist, is destroyed by unrequited love—his suffering emblematic of a world where passion collides with social constraint. The novel’s impact was immense, inspiring a generation of melancholic youth and signaling a shift toward the celebration of the individual’s inner life.

Goethe's later masterpiece Faust explores the cost of this shift. In his pact with Mephistopheles, Faust trades his soul for pleasure and knowledge, mirroring modernity’s bargain: material mastery at the expense of meaning. The result is comfort devoid of spirit—a critique echoed by Nietzsche and Schopenhauer, who warned that modern reason had severed humanity from its primal depths.

Friedrich Schiller, Goethe’s contemporary, gave Romanticism one of its darkest expressions in The Robbers (1782). The play contrasts two brothers: Karl, the noble idealist turned outlaw, and Franz, the schemer who usurps him. Betrayal turns Karl’s virtue into vengeance, and he becomes a rebel consumed by rage. Schiller’s drama reveals the Romantic hero undone by a corrupt world—an anguished soul lashing out against a society that rewards cunning over honor.

In all these works—from Kafka’s insect to Schiller’s outlaw—the same theme recurs: reason, once a beacon of human advancement, becomes a force of alienation when stripped of empathy and imagination. Romanticism arose not to reject reason outright, but to restore balance—to remind the modern world that the soul cannot be measured by utility alone.

As Romanticism swept eastward into Russia, it ignited a literary tradition steeped in passion, nature, and existential longing. At its forefront stood Alexander Pushkin, whose Eugene Onegin (1833) defined the Russian Romantic hero: disillusioned, urbane, and emotionally estranged. Eugene, shaped by St. Petersburg’s rationalist ethos, rejects Tatiana’s sincere love. Years later, transformed by time and regret, he realizes too late the depth of what he spurned. Tatiana, now poised and married, refuses him. Pushkin reveals that beneath modern detachment, the primal force of love endures, accessible only to those rooted in the natural, emotional world.

Pushkin’s early death in a duel passed the Romantic torch to Mikhail Lermontov, whose A Hero of Our Time (1840) introduced Pechorin, a brooding anti-hero driven by restless ambition and existential contradiction. Like Onegin, he is both analytical and impulsive, drawn to love yet incapable of sustaining it. Lermontov reimagines the Romantic hero as a wanderer shaped by inner conflict, whose encounters with women and nature reflect the instability of a modernizing world.

In both Pushkin and Lermontov, nature is not mere scenery but a spiritual force—a contrast to the rational sterility of city life. Their heroes live in tension between intellect and instinct, reason and passion, evoking Romanticism’s central belief: that truth lies not in logic but in the heart’s unpredictable depths.

This tension crosses into England, where Emily Brontë’s Wuthering Heights (1847) presents Heathcliff, a Romantic figure consumed by unrelenting love and revenge. Raised as an outsider and rejected by society, Heathcliff finds in his passion for Catherine both salvation and curse. Her marriage to another for status ignites a life of vengeance, yet their love transcends even death. Brontë’s Gothic landscape mirrors the emotional storm within: untamed, elemental, and hostile to industrial order.

Thomas Hardy, writing in the wake of Romanticism, offers a more tragic vision. In Tess of the D’Urbervilles (1891), Tess embodies innocence crushed by social hypocrisy and industrial modernity. Raped by Alec D’Urberville and later abandoned by her husband Angel Clare, Tess finds her life shaped by forces beyond her control. In a final act of despair and justice, she kills Alec and flees with Angel to Stonehenge, an ancient sanctuary untouched by time. But even this primal refuge cannot save her; she is captured and executed. Hardy mourns the loss of purity in a world governed by money, power, and mechanization, casting modernity as a violator of both nature and soul.

Across Russia and England, Romantic literature reveals the enduring struggle between passion and reason, nature and progress. Its heroes—Onegin, Pechorin, Heathcliff, Tess—live at the edge of their worlds, unable to reconcile the rational order with the wildness within. Romanticism, in its many forms, reminds us that the human heart resists reduction, and that beneath every system lies the eternal cry for meaning, love, and freedom.

The Enlightenment, exalting reason and scientific mastery, sought to subdue nature and impose order on the wilderness. In defiance, the Romantics turned to nature as sanctuary—a symbol of freedom beyond the reach of machines and cities. Their art resisted the mechanization of life, elevating passion, intuition, and the sublime. Yet their dream could not escape reality: the wild, though pure, could not sustain life. As necessity prevailed, people returned to cities, and literature followed. Thus, the age of realism began—a sober mirror to the world as it was.

By the 1830s, the Romantic hero gave way to the common man. After Napoleon’s fall, Europe longed not for grandeur but stability. Literature turned inward, shifting from mythic quests to domestic truths, from legend to journalism. Novels began to reflect the rise of empirical science and industrial society, probing ordinary lives shaped by class, ambition, and disappointment.

A pioneer of realism, Stendhal (Marie-Henri Beyle) unveiled The Red and the Black (1830), the story of Julien Sorel, a young man of humble origins, desperate to rise in post-Napoleonic France. Julien’s aspirations—fueled by idealism and ambition—collide with rigid class structures. Rejected in love and society, he turns violent and is imprisoned. The novel exposes the illusion of meritocracy in a society still ruled by aristocratic privilege. Love, too, appears as an unreachable ideal—desired most when withheld. Stendhal’s vision is clear: equality is promised, but rarely delivered.

Honoré de Balzac, father of French realism, expanded this vision. In The Human Comedy, a series of ninety works, he dissected Parisian society with forensic precision. Père Goriot (1835) portrays three men: a self-sacrificing father, a cynical criminal, and an ambitious youth, Eugène de Rastignac, whose journey through the city reveals its brutal truths. Beneath the surface of love, loyalty, and ambition lies a system driven by greed and indifference. Balzac portrays a world where virtue is rarely rewarded, and all efforts risk absurdity in the face of fate.

Gustave Flaubert’s Madame Bovary (1856) distilled this tragic vision. Emma Bovary, trapped in provincial life and a dull marriage, chases romance through adultery and extravagance. Her fantasies, shaped by sentimental novels, crumble under the weight of reality. Flaubert’s unflinching realism shocked his contemporaries, earning him a trial for obscenity. Yet his critique endures: desire, unchecked by reality, leads not to liberation but ruin. Emma’s suicide is not just personal—it’s cultural, a symbol of the fatal clash between fantasy and fact.

As France reckoned with modernity, Russia entered its own era of upheaval. The 1861 emancipation of 31 million serfs catalyzed a new literary tradition, one deeply attuned to the tension between reform and tradition. Russian realism emerged as a spiritual counterpart to the West, blending psychological depth with social critique.

Ivan Turgenev, the most Western of Russia’s novelists, brought elegant restraint to the Russian novel. In Fathers and Sons (1862), he introduces Bazarov, a young nihilist scorning all traditions. His rebellion against the old world falters when he falls in love, revealing a crack in his hardened philosophy. Bazarov emerges unharmed from a duel with Pavel Petrovich, only to die of an infection contracted during an autopsy. Softened by suffering, he embodies the central paradox of realism: that ideology collapses before life’s complexity. Turgenev foresaw the currents that would lead to revolution, warning that reason alone could not sustain the human soul.

From France to Russia, realism replaced Romanticism’s dreams with the sober clarity of lived experience. Its heroes are not mythic warriors but flawed individuals—Julien, Emma, Rastignac, Bazarov—struggling within a world ruled not by beauty or idealism, but by necessity, class, and chance. These writers, forsaking fantasy, gave literature its most enduring mirror: one that reflects not who we wish to be, but who we are.

No realist casts a longer shadow than Fyodor Dostoevsky (1821–1881), whose psychological depth redefined the novel. In Crime and Punishment (1866), Dostoevsky probes the disintegration of moral certainty in modern Russia. His protagonist, Raskolnikov, a destitute student, murders a pawnbroker, convinced by utilitarian reasoning and a theory of the exceptional individual that anticipates Nietzsche that the act is justified. He imagines himself among the "extraordinary" men, those above conventional morality. But guilt corrodes his rationalizations. Through Raskolnikov’s unraveling, Dostoevsky exposes the spiritual consequences of ideology severed from conscience: no system, no logic can erase human responsibility.

While Dostoevsky looked inward to the soul’s anguish, Leo Tolstoy (1828–1910) turned outward, chronicling the lives of individuals as shaped by history. In War and Peace (1869), Tolstoy renders the Napoleonic Wars not as the product of “great men” but as the unfolding of countless unseen causes. His central character, Pierre, drifts through battlefields and salons, swept along by historical forces beyond his control. Napoleon himself is portrayed not as a master of destiny, but as its instrument. In this vision, agency is an illusion; history is a tide no one commands.

Tolstoy’s Anna Karenina (1878) narrows this lens to domestic life. Anna, stifled by her marriage to a bureaucrat, is seduced by romantic passion and modern ideals of fulfillment. Her tragic fate—foreshadowed by the train that both introduces and ultimately kills her—becomes a symbol of the new age: swift, efficient, but spiritually disorienting. Tolstoy critiques modernity’s promises and reveals how its technologies, like the railway, reshape not just life’s logistics but its expectations.

Together, Dostoevsky and Tolstoy represent the two poles of Russian realism: the inner world of conscience and guilt, and the outer world of historical and social determinism. While Dostoevsky insists on personal moral reckoning, Tolstoy shows how individuals are embedded in the vast, impersonal sweep of time.

By the late 19th century, realism gave rise to naturalism—a movement informed by evolutionary theory and a growing sociological consciousness. Inspired by Darwin’s On the Origin of Species (1859), naturalists viewed human behavior as a product of heredity, environment, and historical forces. Literature began to investigate not only how people lived, but why, tracing actions to underlying biological or societal structures.

Tolstoy's own work anticipates this shift. In War and Peace, he denies the autonomy of historical actors, portraying figures like Napoleon as shaped more than shaping. His narrative emphasizes the collective over the individual, aligning with early sociological and proto-socialist thought. The unnamed masses—peasants, soldiers, servants—are not background noise; they are the true substance of history.

This vision challenges the myth of the “Great Man.” Even the most powerful leaders are portrayed as caught in a web of inherited circumstances and communal movements. As Pierre’s captivity and near-death experiences show, survival often depends less on will than on chance and solidarity. Fate, not force, governs life.

The French realists—Stendhal, Balzac, Flaubert—revealed the moral and social contours of modern life. Their Russian counterparts—Turgenev, Dostoevsky, Tolstoy—deepened the form, balancing the psychological, the social, and the historical. While Turgenev offered a restrained realism shaped by European elegance, Dostoevsky plunged into the abyss of moral reckoning, and Tolstoy expanded the novel into a vast historical inquiry. Together, they charted the course from moral realism to historical determinism, preparing the ground for naturalism’s entrance.

In this next phase, literature would examine not just how humans act, but what drives them—heredity, class, instinct, and social structure. Realism had reflected the surface of life; naturalism now sought its roots.

Émile Zola (1840–1902), the leading figure of literary naturalism, combined realism with psychological depth and sociological vision. His 1885 novel Germinal portrays the brutal life of French coal miners through the story of Étienne Lantier, a young idealist drawn to socialism. As workers strike for better conditions, they meet violent repression. Étienne’s failed rebellion, ending in defeat and dismissal, symbolizes the wider struggle between labor and capital. Like a seed, revolution may germinate underground, but Zola emphasizes how systemic forces—biological, social, and economic—shape and constrain individual will.

Zola’s vision aligns with socialist determinism, akin to Marx’s theory that capitalism would inevitably collapse under its own contradictions. Yet, naturalism is more than ideology—it draws on Darwinian biology, seeing humanity as subject to the same evolutionary forces that govern nature: competition, adaptation, survival. Attempts to engineer a perfectly egalitarian society, Zola suggests, must reckon with nature’s indifference to equality.

From France, the naturalist lens shifted northward to Scandinavia, where August Strindberg’s The Red Room (1879) explored the tension between artistic idealism and social conformity. Arvid Falk, disillusioned by bureaucratic drudgery and family betrayal, joins a circle of impoverished artists who meet in a restaurant’s crimson-lit room. Though initially defiant, they too succumb to compromise, poverty, and suicide. Arvid’s return to society—through marriage and a teaching job—marks the triumph of reality over revolt.

Strindberg paints society as a Darwinian battlefield, where even revolutionaries are drawn back into the gravitational pull of order, hierarchy, and survival. The desire for change is strong, but biology—favoring stability and self-preservation—often prevails. In this light, socialism must contend not only with systems of power but with human nature itself.

Across the Atlantic, Jack London (1876–1916) joined this tradition with The Call of the Wild (1903), a short novel tracing the regression of Buck, a domesticated dog, into his primal, wolf-like nature amid the frozen landscapes of the Yukon. As environmental pressures intensify, Buck sheds the veneer of civilization. London suggests that beneath society’s structure lies a dormant instinct ready to awaken in times of crisis. Civilization may nurture altruism, but in extremis, it is survival, not virtue, that rises to the surface.

London’s work echoes themes found in Golding’s Lord of the Flies: in the absence of order, the primal returns. Influenced by socialism and Darwinism, London understood human behavior as inseparable from its environment. His portrayal of regression is not pessimism but realism—an acknowledgment of the instincts that lie just beneath civilization’s surface.

Naturalism bridges Darwinian science and literary realism, revealing the unconscious drives behind human action. Where realism depicted society with objectivity, naturalism looked deeper—toward biology, environment, and psychological compulsion. Writers like Zola, Strindberg, and London replaced moral judgment with causal explanation, presenting humans as products of inherited instincts and historical circumstance.

This movement laid the groundwork for modernism, which would later turn inward to explore the psyche. But before Freud, the naturalists had already begun excavating the hidden forces beneath the surface of behavior, showing how evolution and environment govern the arc of human life.

Naturalism, as a literary movement, is grounded in two opposing forces: the biological imperative of survival and the idealism of socialism. It captures the tension between human aspirations for justice and equality, and the animal instincts of self-interest, competition, and power preservation. Though we champion liberty and fairness, our evolutionary inheritance often undermines these ideals. As George Orwell’s Animal Farm illustrates, revolutions may begin in pursuit of freedom, only to reproduce the same hierarchies they sought to destroy.

This disillusionment led writers to explore psychology, seeking to understand the contradictions within human nature—our benevolence in security and cruelty in adversity. From this inquiry, modernism emerged, turning inward to explore the unconscious mind. Sigmund Freud’s “talking cure” revolutionized literature, enabling characters to express suppressed desires without structural constraints. This gave rise to stream of consciousness, a narrative form influenced by William James, who described consciousness not as segmented rooms but as a fluid stream, ever-shifting and continuous.

Where naturalists focused on social systems, modernists illuminated inner life. Between 1910 and 1930, literature shifted its gaze from collective movements to private experience, probing the subjective, fragmented psyche. This modernist impulse first appeared in Fyodor Dostoevsky’s Notes from Underground (1864), where a reclusive narrator recounts his humiliation, pettiness, and self-loathing from a literal and metaphorical basement. Embittered by his own impotence and society’s indifference, he lashes out at others—most notably Liza, a prostitute—before collapsing into deeper isolation. Dostoevsky’s antihero, ruled not by ideology but by inner contradictions, stands as the prototype for the modernist protagonist.

This interior descent continues in Knut Hamsun’s Hunger (1890), widely regarded as the first fully modernist novel. Its unnamed narrator, a starving writer wandering Kristiania (now Oslo), spirals into madness and absurdity: chewing on his pencil, refusing aid out of pride, and imagining his dissolution into a sea of “dark monsters.” His ordeal, devoid of plot or resolution, mirrors the fragmented and hallucinatory nature of consciousness itself. Here, the Cartesian axiom “I think, therefore I am” takes on a harrowing literalness: thought is all that remains.

Against this backdrop of despair, Marcel Proust offers a redemptive counterpoint. In In Search of Lost Time (1913–1927), the narrator experiences a sudden flood of memory triggered by tea and cake. These involuntary memories, prompted by sensory impressions, resurrect lost time and past selves. Proust reveals that while time erodes our identity, memory—especially when unbidden—can restore it, if only briefly. Yet because such moments are fleeting, Proust turns to art as the only true refuge: a means of preserving emotion, experience, and meaning beyond the reach of time’s decay.

Knut Hamsun’s Hunger (1890), set in Kristiania (now Oslo), is widely regarded as the first modernist novel. It follows an unnamed writer descending into madness and starvation, wandering the city in a fog of hunger, hallucination, and existential despair. His pride prevents him from accepting help, and his internal turmoil reflects the psychological isolation emblematic of modern life.

In contrast, Marcel Proust’s In Search of Lost Time (1913–1927) transforms existential pessimism into artistic transcendence. Triggered by the taste of a madeleine, the protagonist Marcel relives forgotten memories through involuntary sensations. Proust reveals time as humanity’s great adversary, yet suggests that memory—and ultimately art—can resist its erasure. Through this, beauty becomes not external but internal: memory infused in objects, places, and sensations, made immortal through narrative.

James Joyce’s Ulysses (1922) compresses the structure of Homer’s Odyssey into a single day in Dublin. The novel follows Leopold Bloom, Stephen Dedalus, and Molly Bloom through a kaleidoscope of shifting styles, culminating in a stream-of-consciousness finale. Joyce captures time as experienced subjectively—fragmented, nonlinear, and fluid—echoing William James’ psychological model. His technique unveils the subconscious and gives voice to desires normally censored by social norms.

Thomas Mann’s The Magic Mountain (1924) shifts from the freedom of Joyce’s Dublin to the confines of a Swiss sanatorium. There, time slows, and characters face philosophical questions amid physical illness and existential suspension. Like Kafka’s protagonists, Mann’s figures are trapped—both in space and in themselves—reflecting the paralysis of a Europe on the brink of catastrophe.

In The Magic Mountain, Thomas Mann uses the setting of a Swiss sanatorium to mirror the psychological and ideological illnesses afflicting early 20th-century Europe. The novel presents a philosophical confrontation between humanism, socialism, and Romanticism, reflecting the continent's fractured condition in the wake of war. As realism depicted society through empirical observation and naturalism drew on evolutionary theory, modernism turned inward, exploring the complexities of consciousness.

Emerging from this shift is magical realism, a genre that fuses the mundane with the extraordinary, reviving ancient traditions where magic and reality were inseparable. Rooted in religious myth, magical realism gained new vitality in the 20th century, influenced by the uncertainty of quantum physics and the psychological depths uncovered by Freud and Jung. Like dreams, these narratives blur boundaries between inner and outer worlds, re-enchanting modern experience.

Mikhail Bulgakov’s The Master and Margarita, written in the 1930s and published posthumously in the 1960s, is a foundational work of magical realism. Under Stalin’s regime, Bulgakov evaded censorship by embedding social critique within fantastical narratives. The novel centers on Satan’s surreal visit to Moscow and follows the lives of the Master, a disillusioned writer, and Margarita, his passionate lover who bargains with the devil to save him.

Their story reflects the clash between creative freedom and political oppression. The fantastical chaos Satan unleashes evokes the turmoil of Soviet life, while the Master’s despair and Margarita’s defiant love embody the human struggle for meaning and transcendence. Rich with allegory, satire, and religious symbolism, Bulgakov’s novel became a precursor to the South American magical realism that would soon follow, blending political commentary with metaphysical wonder.

Together, these works mark modernism’s turn inward: from naturalism’s external realities to the interiority of thought, memory, and consciousness.

From the religious enchantments of The Master and Margarita, magical realism shifts toward the opium-laced visions of The Blind Owl (1936), a landmark in Persian literature by Sadeq Hedayat. Through the fevered mind of a tormented painter, haunted by a woman’s piercing gaze and driven by obsession and shame, Hedayat explores the collapse of identity, the boundaries of reality, and the madness of the self. Influenced by Freud, Jung, and the Tibetan Book of the Dead, Hedayat makes death a central force, both tormentor and liberator, while opium offers a fragile escape from existential despair.

The narrative blurs past and present, life and death, in a cyclical structure where characters reappear in shifting forms. The painter’s failed attempts to immortalize his beloved in art reflect the futility of creation against time’s erasure. His journey unfolds as both confession and hallucination, with recurring figures like the mocking old man and the silent, dead wife echoing Dostoevskian and Poe-like motifs. Through this, Hedayat crafts a dreamlike meditation on mortality and the fractured psyche, where reality dissolves into illusion.

A parallel vision appears in Juan Rulfo’s Pedro Páramo (1955), a foundational Latin American novel of magical realism. Juan Preciado, seeking his estranged father, arrives in the ghost town of Comala, only to find it populated by the dead. Through disembodied voices and fragmented memories, the life of Pedro Páramo—a ruthless patriarch destroyed by obsession—is gradually revealed. The novel unfolds like a séance, its chorus of spectral narrators constructing a collective memory of corruption, grief, and decay.

Rulfo abandons traditional structure, instead presenting a dreamscape where the boundaries of speaker, time, and self are fluid. The novel captures the death of a town and its people in lyrical fragments, echoing Mexico’s revolutionary history and existential fatalism. Like Hedayat, Rulfo fuses myth, memory, and mortality into a poetic vision of human fragility.

From Mexico’s spectral deserts, the narrative shifts to Europe in Günter Grass’s The Tin Drum (1959), where the child-protagonist Oskar Matzerath, confined in an asylum, recounts his life in Nazi-era Danzig (now Gdańsk, Poland). Refusing to grow beyond the age of three, Oskar rejects adulthood, which he equates with war, conformity, and moral compromise. Possessing a voice that can shatter glass and a willful control over his physical growth, he becomes both witness and participant in Germany’s descent into madness.

Oskar’s tin drum, a symbol of protest and memory, accompanies him through the chaos of war and into postwar celebrity. Yet beneath his success lies guilt—for inciting violence, for surviving when others perished, for bearing witness to atrocity while remaining complicit. Grass, a former soldier himself, uses Oskar to explore the psychic wounds of a generation, blending grotesque satire with surreal detail to expose the moral collapse of modern history.

In The Tin Drum, Günter Grass presents music as a mystical force rivaling storytelling in its power to transcend reality. Yet for Oskar, its magic becomes a burden; though he achieves fame through his drumming, he is haunted by guilt for having incited violence. His self-imposed punishment—a murder confession leading to a life sentence—becomes a metaphor for the generational guilt carried by those who survived war.

Oskar’s narrative blends fantasy, magical realism, and moral ambiguity. As both Christ-like and diabolical, he embodies the unresolved tension between good and evil. His unreliable voice mirrors the pain of a fractured postwar psyche, where justice and sin are no longer clearly defined.

This reflection on memory and morality leads naturally to Gabriel García Márquez’s One Hundred Years of Solitude (1967), a cornerstone of magical realism. Spanning seven generations of the Buendía family in the mythical Colombian town of Macondo, the novel traces the arc from utopia to decay. José Arcadio Buendía’s dream of a city of mirrors gives birth to Macondo—a paradise that gradually succumbs to civil war, capitalism, insomnia, and ruin.

The novel’s surreal events, including a plague of wakefulness and a town consumed by ants, serve as metaphors for modernity’s fragmentation. The Buendías’ decline parallels humanity’s fall from innocence, as each generation inherits cycles of violence, desire, and despair. Time bends and folds, echoing the loneliness that defines human consciousness—our yearning for connection in a world that isolates us, even among others. This solitude culminates in the death of God, leaving modern man marooned in a crowd.

The theme of existential isolation continues in the works of Haruki Murakami. In Kafka on the Shore (2002; English translation 2005), Murakami weaves surreal elements, including talking cats, raining fish, and mysterious disappearances, into a story of a runaway boy confronting fate and identity. Like the protagonists of Franz Kafka’s fiction, the boy inhabits a world shaped by alienation and paradox.

Murakami’s blend of the fantastical and the mundane evokes the timeless charm of fairy tales, yet his settings are modern and psychologically nuanced. His characters yearn for spontaneity in an increasingly rational world, revealing a deep human need for wonder. In Murakami’s universe, magic is not escape, but revelation—an entry point into emotional truth.

This return to enchantment reflects a deeper shift in modern consciousness. Once banished by science, the magical returns through the uncertainty introduced by quantum physics. As determinism gives way to indeterminacy, the boundaries between fact and fiction, certainty and ambiguity, dissolve.

With this shift, storytelling itself transforms. No longer a vehicle for stable truths, it now mirrors our fragmented view of reality. As we enter the postmodern era, narratives become kaleidoscopic—layered, self-aware, and unresolved—reflecting the complexity of human experience in a world where meaning is no longer fixed.

Thus, the modern story becomes a mirror not of certainty, but of doubt. It challenges us to see truth not as something given, but as something continuously sought. In this fractured narrative landscape, magical realism serves as a bridge—between past and present, reason and mystery, self and world.

Together, Hedayat, Rulfo, and Grass present a vision of magical realism not as fantasy, but as a means of confronting trauma, memory, and mortality—where dream and nightmare merge, and art becomes both a refuge and a reckoning.

Friedrich Nietzsche challenged the foundations of Western thought by declaring the "death of God," arguing that rationalist humanism could not replace the spiritual meaning once provided by religion. In its absence, empirical truths proved insufficient to quell existential despair. Nietzsche proposed that art might offer redemption by restoring meaning beyond reason.

Other philosophers offered alternative responses: for Schopenhauer, music accessed a deeper truth; for Sartre, meaning arose from personal freedom and choice; for Camus, significance emerged through creative defiance in an absurd world. Together, they shifted the focus from universal truth to individual existence.

Nietzsche’s critique also undermined the concept of a singular truth, laying the foundation for postmodernism, which rejected the supremacy of Western narratives. As post-colonial voices gained prominence amid global decolonization, literature opened to diverse perspectives that challenged Eurocentric worldviews.

Nietzsche’s suspicion of a coherent self influenced existentialists like Sartre and Camus, who portrayed identity as fragmented and self-made. These ideas shaped literature’s turn toward ambiguity and absurdity, as seen in Samuel Beckett’s Waiting for Godot, where time and meaning collapse into existential uncertainty.

By the 21st century, philosophy increasingly questioned anthropocentrism. Posthumanist thought and environmental ethics prompted literature to decenter the human, giving animals and ecosystems narrative agency. In authors like Haruki Murakami, animals are not symbols but co-actors, reflecting a broader critique of human dominance and a call for interspecies empathy.

This shift appears in posthumanist literature, where humans are sometimes depicted as antagonists amid ecological collapse. Literature now interrogates not only human morality but our relationship with the planet, urging a redefinition of ethical coexistence.

Joseph Conrad’s Heart of Darkness (1899) exposed colonial brutality in Africa, yet did so through a European lens. In contrast, Chinua Achebe’s Things Fall Apart (1958) presented an African response to colonialism. Its protagonist, Okonkwo, embodies the tension between tradition and change, honor and violence. His downfall reflects the cultural rupture wrought by colonial intrusion, offering a nuanced portrayal of both indigenous and imperial worlds.

Albert Camus’s The Stranger (1942) explores existential absurdity through Meursault, a man emotionally detached from his mother’s death and later sentenced to death for an unpremeditated murder. His trial becomes less about the crime than about his indifference, revealing society’s demand for emotional conformity. In prison, Meursault comes to accept life’s inherent meaninglessness and, in that acceptance, finds peace.

Across these works, literature mirrors the philosophical shift from certainty to ambiguity, from anthropocentrism to interdependence. Whether confronting colonialism, existential despair, or ecological crisis, it seeks not final answers but deeper questions about what it means to exist.

Albert Camus, in The Stranger, asserts that happiness arises from accepting life’s absurdity. Meursault’s emotional detachment is not apathy but a quiet rebellion against a society that demands conformity, particularly in expressions of grief. In Camus’s critique, modern justice punishes not only crime but the refusal to feign sentiment, revealing a world where authenticity is criminalized.

This marks a transition from older myths—where women civilize men through love, as in Gilgamesh or Beauty and the Beast—to a modern age where institutions, not romance, tame the individual. In Meursault’s case, it is not love that reforms, but the cold mechanisms of the legal system.

Jorge Luis Borges, in The Library of Babel, explores the paradox of infinite knowledge confined by rational structure. His infinite library contains all possible books, yet understanding it proves futile. Rationality, once the hallmark of Enlightenment progress, now emerges as a limitation—ordering imagination, yet stifling it. The library becomes a metaphor for the modern mind: overwhelmed by possibility, paralyzed by interpretation.

Thomas Pynchon’s Gravity’s Rainbow confronts the myth of progress. Set during World War II, the novel links corporate greed with technological advancement, suggesting that the tools of modernity are also instruments of destruction. Its central symbol, the V-2 rocket, unites physics, paranoia, and death, encapsulating the entropy at the heart of Western history. Pynchon dismantles the illusion that civilization moves toward justice; instead, he portrays a spiral into chaos.

Kurt Vonnegut’s Slaughterhouse-Five offers a postmodern anti-war narrative through the dislocated experiences of a time-traveling soldier. Time loses its linearity; trauma renders memory fragmentary. The randomness of existence—expressed through science fiction and satire—exposes the absurdity of war, which annihilates life’s fragile miracle. Vonnegut reframes storytelling as a survival mechanism, allowing us to process the incomprehensible.

Together, these works reveal a literary shift. Ancient storytelling grappled with nature’s uncertainty: death, war, sex, joy. Modernity, in mastering nature, replaced these uncertainties with man-made systems: science, law, reason. But the very rationality that promised clarity now collapses into doubt. Postmodernism and posthumanism respond by questioning human centrality and embracing ambiguity.

Through Borges’s infinite imagination, Pynchon’s chaotic systems, Camus’s existential revolt, and Vonnegut’s fractured time, literature becomes a mirror of modern disillusionment. Yet in this disillusionment, storytelling remains vital—not to impose meaning, but to endure its absence.

The discovery of fire marked a turning point in human evolution, enabling cooking, freeing time, and fostering reflection. Around the fire, early humans became not just survivors, but thinkers. With this rise in consciousness came awareness of mortality—a uniquely human burden.

To confront death, humans created stories. These early narratives, often religious, promised life beyond death, transforming mortality from an end into a passage. Over time, mythologies emerged—populated by gods, demons, and heroes—providing moral and existential frameworks. Among them, the Epic of Gilgamesh stands out, depicting a hero's failed quest for immortality and his turn instead toward legacy through civilization.

Storytelling thus became a tool for meaning-making, connecting generations and shaping civilizations. As religion gave way to empire, narratives shifted toward conflict—epics of war, valor, and divine judgment that united societies through shared enemies and purpose. Over time, themes of love and comedy entered these stories, reflecting the broader spectrum of human desire and social complexity.

This tradition dominated until the Enlightenment, when reason replaced religion as the guiding principle. Stories began reflecting human mastery over nature, shifting from myth to science, while still grappling with enduring themes: conflict, love, mortality, and meaning.

The Romantic movement reacted to this rationalism, emphasizing emotion, passion, and the dignity of common lives. Realism followed, depicting everyday struggle with unflinching honesty. Darwin’s theories then propelled naturalism—a style that revealed humans as animals shaped by environment and instinct, though often ignoring the depth of inner life.

Modernism responded by turning inward, exploring consciousness and subjective experience. This led to magical realism, which fused realism with dreamlike wonder, reintroducing enchantment to modern life.

In the posthuman era, narratives have expanded beyond the human, encompassing animals, machines, and ecosystems. Literature now questions human centrality, embracing interdependence and ecological awareness.

Looking forward, emerging technologies like AI and virtual reality may reshape storytelling entirely—transforming audiences into participants and blurring the boundary between fiction and reality. Yet through every transformation, storytelling remains our oldest and most enduring response to the mystery of existence.

Storytelling is the foundation of human meaning. Across time, it has shaped our perception, given form to our hopes, and structured our understanding of existence. When our narratives collapse, so too does our sense of purpose.

From ancient myths to modern media, stories have offered frameworks for survival, identity, and aspiration. We tell ourselves that effort brings reward, that love redeems, or that legacy matters—narratives that anchor our motivations and guide our actions. Even our daily lives are shaped by these internal fictions.

As rationality evolved, it never replaced storytelling—it merely complemented it. Rational thought orders the external world; stories give meaning to the internal one. Without narrative coherence, reason alone cannot satisfy the human psyche.

In an age of machines and algorithms, the loss of storytelling would mark the loss of humanity itself. Stories are what separate us from cold logic. They are how we navigate death, desire, and uncertainty.

Media now harnesses this ancient power, shaping collective emotions, fueling division or unity, and redefining truth itself. Like ancient empires, today’s outlets construct heroes and villains, bending reality through narrative control.

As our species evolves, so too do our stories. Science fiction once belonged to fantasy, but now reflects our real anxieties and ambitions—space travel, artificial life, longevity. Yet at the core, our deepest longing remains unchanged: to live fully and meaningfully.

Stories mirror these desires. Myths of heroism, conquest, romance, and utopia reflect our enduring need for connection, triumph, and transcendence. When reality disappoints, we retreat into narrative—finding comfort, clarity, and purpose.

The future of storytelling lies not just in new technology but in timeless needs. Whether told by humans or AI, stories will remain the vessels of our fears, dreams, and search for meaning. Even in a world remade by machines, the human essence will endure through the narratives we tell.

So, as we look ahead, the question is not whether storytelling will survive—but how it will continue to evolve, and in doing so, continue to define what it means to be human.

On Philosophy and the Human Condition

Philosophy is the disciplined pursuit of wisdom—the rational inquiry into existence, morality, and the nature of reality. Rooted in the traditions of Socrates, Plato, and Aristotle, it arose from humanity’s desire to understand the world through reason and dialogue. Though its questions are ancient, philosophy remains vital as it challenges us to think critically, question assumptions, and seek meaning in a changing world.

Humans are guided by three intrinsic faculties: instinct, emotion, and reason. Instinct ensures survival and reproduction; it operates unconsciously, driving action without deliberation. Emotion enriches experience, reflecting internal states and influencing behavior. It motivates, warns, and inspires, shaping our engagement with the world. Reason, however, distinguishes humanity. It allows us to reflect, plan, and transcend immediate impulses. Unlike instinct or emotion, reason is developed through experience and learning. It alone can discipline the other faculties, aligning action with long-term purpose.

These three tools—instinct, emotion, and reason—form the foundation of human nature. Philosophy acts as their interpreter. It teaches us to examine our desires, understand our reactions, and refine our thinking. By doing so, it elevates our existence from mere reaction to intentional living.

The earliest thinkers, prompted by natural phenomena and existential dread, developed myths and metaphysical systems to explain the unknown—especially death. In works like the Epic of Gilgamesh, we see humanity’s first response to mortality: the search for legacy when immortality proved impossible. Religions and belief systems followed, offering comfort through visions of the afterlife.

In modernity, many have turned away from such beliefs, preferring secular interpretations of existence. Yet the questions remain. What is the meaning of life? How should we live? What lies beyond perception?

Philosophy endures because these questions are eternal. It is not a relic of the past, but a compass for the future—illuminating the path with clarity, coherence, and the love of wisdom.

In early human societies, power belonged to the strong. Over time, myth and memory turned leaders into deities, and these gods—first tied to nature—gradually took on human traits. Religious systems emerged to explain life, death, and the cosmos.

Philosophy arose in contrast, seeking truth through reason rather than divinity. It asked two fundamental questions: What exists? and How do we know it? These questions gave rise to ontology (the study of being) and epistemology (the study of knowledge).

Early philosophers explored the physical world, the origins of life, and the workings of the mind. Yet as thought advanced, philosophy birthed specialized disciplines: physics in the 17th century with Galileo and Newton, biology in the 19th with Darwin, and psychology in the 20th with Freud and Jung. As these sciences matured, philosophy ceded its empirical domains and narrowed its focus to metaphysical inquiry.

By the modern era, philosophy appeared sidelined, no longer central to the intellectual landscape it had once shaped. Many philosophers retreated from empirical engagement, leaving physics, biology, and psychology to others. What, then, remains for philosophy?

The answer may lie in intuition—a faculty bridging instinct and reason. Unlike methodical science or mythic belief, intuition offers immediate, non-discursive insight. It links the unconscious depths of psychology with the rational clarity of science.

Henri Bergson championed this vision. He argued that intuition provides a direct experience of reality—fluid, creative, and vital—where reason alone falters. Intuition enlivens, where abstraction often deadens. For Bergson, it was the path back to life’s essence.

Thus, philosophy today must reclaim its vitality—not by reverting to past methods, but by embracing intuition as its instrument. Where science explains, philosophy can illuminate; where psychology interprets, philosophy can synthesize. It should not compete with the sciences, but complete them.

To understand philosophy’s future, one must know its past. We will trace its 2,500-year journey—its terms, its schools, its dichotomies: ontology vs. epistemology, rationalism vs. empiricism, humanism vs. utilitarianism, existentialism vs. postmodernism, egalitarianism vs. elitism.

At its heart, philosophy still asks: What is? and How do we know it? And from these, it continues to ask the most human question of all: How should we live?

Philosophy began with two central inquiries: ontology, the study of existence—what is real—and epistemology, the study of knowledge—how we know what we know.

Ontology examines the nature of being and the kinds of entities that exist. It raises questions about the essence of humanity—whether we are simply animals or possess qualities that set us apart. These distinctions shape moral frameworks and reinforce values such as the sanctity of life. Ontological inquiry laid the foundation for science, as thinkers like Aristotle sought to understand the natural world and humanity’s place within it.

Epistemology explores how knowledge is acquired and the limits of human understanding. Immanuel Kant argued that we do not perceive reality directly; our knowledge is shaped by innate cognitive structures. Michel Foucault extended this, asserting that knowledge is inseparable from power, and that what is accepted as truth often reflects dominant ideological forces rather than objective facts.

Together, ontology and epistemology opened the door to modern science, while also prompting critical reflection on the influence of power over knowledge.